Extract Anything from Any PDF: Inside Foxit’s Advanced Extraction Engine

Basic PDF extraction libraries break on scanned documents, complex tables, and form fields, leaving downstream pipelines starved of clean data. Foxit’s PDF Structural Extraction API combines OCR, layout recognition, and AI parsing to return all twelve PDF element types as structured JSON, ready for RAG, BI, and CRM workflows.

Your PDF extraction pipeline passes unit tests against the sample invoices you built it on. Then production arrives and you’re looking at 47% garbled output on the Q4 contract batch because half those documents are scanned TIFFs wrapped in a PDF envelope, and your extraction library has no concept of what an image-only page actually is.

The failure modes are specific. PyMuPDF’s get_text() returns empty strings on scanned PDFs because it reads content streams directly, and image-only pages carry no text stream. pdfplumber’s table detection merges rows when column widths span non-uniform grids, which is standard in any financial statement that mixes summary and line-item rows on the same page. Embedded images containing meaningful text (stamped signatures, engineering drawing annotations, letterhead logos) get silently dropped. The library extracts coordinates for the XObject reference but does nothing with the raster data inside. Form fields built on non-standard annotation types (AcroForms using widget annotations with custom action streams) lose their values entirely when you serialize to text.

The architectural distinction that creates this problem is the difference between content serialization and semantic extraction. A PDF converter reads a content stream and writes out whatever character sequences it finds in rendering order. An extraction engine understands the spatial relationships between those character sequences: that two columns of text at x=72 and x=320 are parallel body copy, that the row at y=210 belongs to the table starting at y=180, that the text block repeating on every page is a header carrying lower retrieval weight in a RAG index. Output that lacks spatial and semantic classification looks correct on screen but breaks every downstream consumer that depends on structure.
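To make the distinction concrete, here is a minimal sketch of why rendering order and reading order diverge on a two-column page. The `Span` type, the `column_gap` threshold, and the sample coordinates are illustrative assumptions, not part of any library or of Foxit's engine, which does far more than this.

```python
from typing import NamedTuple


class Span(NamedTuple):
    text: str
    x: float  # left edge in points
    y: float  # top edge in points (origin at top-left in this sketch)


def reading_order(spans: list[Span], column_gap: float = 100.0) -> list[str]:
    """Group spans into columns by left edge, then read each column top to bottom."""
    columns: list[list[Span]] = []
    for span in sorted(spans, key=lambda s: s.x):
        # Start a new column when the left edge jumps by more than column_gap.
        if columns and span.x - columns[-1][0].x <= column_gap:
            columns[-1].append(span)
        else:
            columns.append([span])
    return [s.text for col in columns for s in sorted(col, key=lambda s: s.y)]


spans = [
    Span("Left para 2", 72, 140), Span("Right para 1", 320, 100),
    Span("Left para 1", 72, 100), Span("Right para 2", 320, 140),
]

# Naive serialization: top to bottom, left to right, which interleaves the columns.
naive = [s.text for s in sorted(spans, key=lambda s: (s.y, s.x))]
ordered = reading_order(spans)
print(naive)
print(ordered)
```

The naive sort alternates between the two columns, while the column-aware version keeps each column's paragraphs together. A fixed gap threshold breaks on real layouts, which is the kind of case a dedicated layout recognition layer exists to handle.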

BI dashboards require numbers tied to the right row labels. AI ingestion pipelines require heading hierarchy to chunk accurately. CRMs require form field values extracted from AcroForm widget dictionaries, delivered with field names intact. The delta between what basic extraction libraries return and what those systems can actually consume is where document pipeline engineering hours accumulate.

How Foxit’s PDF Structural Extraction Engine Works Under the Hood

Foxit exposes this capability as the PDF Structural Extraction (Trial) endpoint inside the PDF Services API (POST /pdf-services/api/documents/pdf-structural-extract). Trial status means the schema is versioned at v1.0.7 and may evolve, but the contract is stable enough to build against today, and the endpoint runs against the production base URL at developer-api.foxit.com.

The engine runs three coordinated layers. The OCR layer operates on rasterized page content, recognizing characters from image-based PDFs and scanned documents across 200+ languages. The layout recognition layer applies spatial analysis to identify column boundaries, reading order, table cell boundaries, figure regions, and header/footer zones. The AI-based parsing layer classifies extracted objects semantically, resolving ambiguous blocks (a text run that spans two layout columns, or a figure caption that reads syntactically like a section heading) into typed elements.

All three layers run inside Foxit’s core PDF engine, which powers 700 million+ users across 20+ years of production deployments. That engine has native awareness of PDF internal structures: content streams, XObject dictionaries, AcroForm field trees, and annotation layers. The OCR layer operates on the same internal page representation the rendering engine uses, so it handles annotated PDFs where text overlaps image regions, and form fields where the visual display and stored value diverge.

The same Structural Extraction endpoint is also Step 1 of Foxit’s PDF Translation (Trial) workflow, which signals that the extraction output is structured enough to backbone a full rewrite-and-rerender pipeline.

NVIDIA’s July 2025 NeMo Retriever research on PDF extraction showed that specialized OCR-based pipelines outperform general-purpose vision-language models on retrieval recall and throughput for complex elements including tables, charts, and infographics. VLMs produce plausible-looking output on clean documents but degrade on exactly the edge cases (multi-column scans, mixed-content pages, annotated overlays) that a specialized pipeline handles systematically.

The Full Object Map: All 12 Extractable PDF Element Types

The Structural Extraction schema v1.0.7 defines twelve element types in the type enum: title, head, paragraph, table, image, headerFooter, form, hyperlink, footnote, sidebar, annotation, and formula.

The API exposes no per-object filter parameters. The only request body fields are documentId (required) and password (optional, for protected PDFs). The engine extracts the full element graph and returns everything in one asynchronous round-trip. You filter client-side on the returned JSON. The design is correct for the workload because partial extraction would require re-running layout recognition per request, costing more compute than transmitting the full element set in a single ZIP.
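Client-side filtering can be sketched in a few lines. The helper names `select_elements` and `count_by_type` are hypothetical, and the sample IDs other than `title1` are made up for illustration; only the `elements` array and the `type` field come from the schema described here.

```python
from collections import Counter


def select_elements(analyze_result: dict, wanted: set[str]) -> list[dict]:
    """Return only the elements whose type is in `wanted`, preserving order."""
    return [e for e in analyze_result.get("elements", []) if e.get("type") in wanted]


def count_by_type(analyze_result: dict) -> Counter:
    """Tally elements per type, useful as a quick sanity check on a result."""
    return Counter(e.get("type") for e in analyze_result.get("elements", []))


# Hypothetical, trimmed-down analyzeResult for illustration only.
sample = {
    "elements": [
        {"id": "title1", "type": "title"},
        {"id": "p1", "type": "paragraph"},
        {"id": "t1", "type": "table"},
        {"id": "p2", "type": "paragraph"},
    ]
}

tables = select_elements(sample, {"table"})
text_blocks = select_elements(sample, {"title", "head", "paragraph"})
counts = count_by_type(sample)
```

Because the filter preserves array order, the reading order the engine produced carries through to whatever subset you keep.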

The result is a ZIP archive. At minimum it contains StructureInfo.json, whose top-level analyzeResult object holds version, pages, elements, and info. Documents that contain figures or tables also produce additional binary files (image renditions and table renditions) alongside the JSON, referenced from individual elements so the JSON payload stays manageable on large documents.

Each element in the document-wide flat elements array carries its own id, type, content, region (with page and an 8-point boundingBox polygon), and score confidence value. A table element adds its cell grid. A form element adds field data. An image element points to its binary file in the ZIP. Because title, head, and paragraph elements appear in document reading order in the elements array, they chunk cleanly on semantically correct boundaries, which is what a RAG index needs to return complete, coherent passages.
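Many downstream tools expect an axis-aligned rectangle rather than a polygon. A minimal conversion sketch follows, assuming the eight numbers are four interleaved (x, y) corner pairs; the function name `polygon_to_rect` is hypothetical and the sample values are illustrative.

```python
def polygon_to_rect(bounding_box: list[float]) -> tuple[float, float, float, float]:
    """Collapse an 8-number polygon [x1, y1, ..., x4, y4] to (x_min, y_min, x_max, y_max)."""
    if len(bounding_box) != 8:
        raise ValueError(f"expected 8 numbers, got {len(bounding_box)}")
    xs = bounding_box[0::2]  # every even index is an x coordinate
    ys = bounding_box[1::2]  # every odd index is a y coordinate
    return (min(xs), min(ys), max(xs), max(ys))


# Illustrative polygon for a title block near the top of a US Letter page.
rect = polygon_to_rect([72, 47, 317, 47, 317, 80, 72, 80])
width, height = rect[2] - rect[0], rect[3] - rect[1]
```

Taking the min and max of each axis also gives a usable bounding rectangle when the polygon is slightly rotated, as it often is on skewed scans.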

Each type maps directly to a downstream use case: table feeds financial reporting pipelines, form drives automated CRM data entry, image routes to computer vision workflows or document archives, annotation builds compliance audit trails, and head combined with paragraph elements in reading order feeds RAG ingestion.

API Walkthrough: The Four-Step Async PDF Extraction Flow

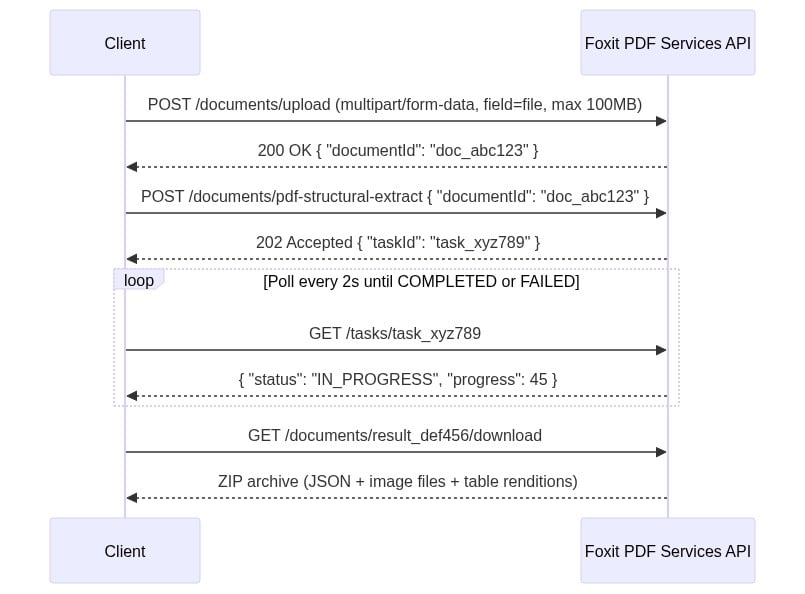

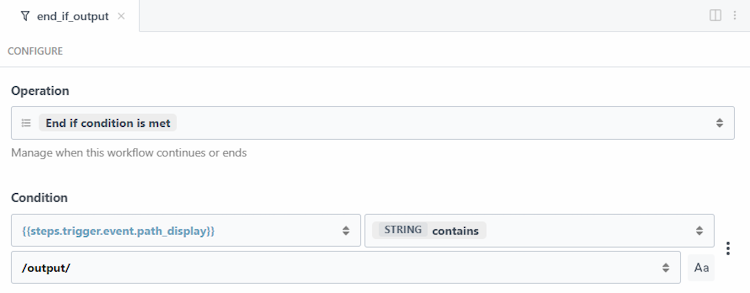

There’s no synchronous path. You upload, get a task ID, poll until completion, then download the result ZIP. Every request carries two headers: client_id and client_secret (lowercase snake_case, as specified in the API spec’s security schemes). Both come from the Developer Portal’s default application. Pass them as named HTTP headers on every request and do not use Authorization: Bearer.

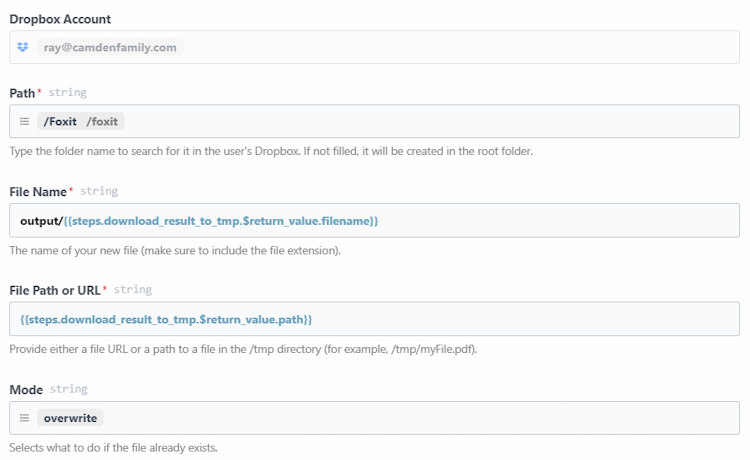

To get credentials, create a free developer account at account.foxit.com/site/sign-up (no credit card required, no sales call). Once you’re in, copy the Client ID and Client Secret pair from the default application in the Developer Portal and treat them like any other API secret.

The four-step sequence runs as follows:

Step 1: Upload the PDF to POST /pdf-services/api/documents/upload as multipart/form-data with the file under field name "file". The 100MB ceiling is enforced with a 413 and error code MAX_UPLOAD_SIZE_EXCEEDED. The response body returns { "documentId": "doc_abc123" }.

Step 2: Start extraction with POST /pdf-services/api/documents/pdf-structural-extract, passing { "documentId": "doc_abc123" }. Add a "password" field for protected PDFs. The response is 202 Accepted with { "taskId": "task_xyz789" }.

Step 3: Poll GET /pdf-services/api/tasks/{task-id}. The TaskResponse carries taskId, status, progress (0-100 integer), resultDocumentId, and an optional error object. The status enum values are PENDING, IN_PROGRESS, COMPLETED, and FAILED. Portal narrative copy occasionally uses “PROCESSING,” but the schema enum value is IN_PROGRESS. Match your code against the enum. Poll until COMPLETED and capture resultDocumentId.

Step 4: Download with GET /pdf-services/api/documents/{resultDocumentId}/download, which streams the ZIP archive. The optional filename query parameter overrides the default filename.

The complete cURL sequence for all four steps:

```bash
# Step 1: Upload
curl -X POST "https://na1.fusion.foxit.com/pdf-services/api/documents/upload" \
  -H "client_id: YOUR_CLIENT_ID" \
  -H "client_secret: YOUR_CLIENT_SECRET" \
  -F "file=@invoice_batch.pdf"
# {"documentId":"doc_abc123"}

# Step 2: Start extraction
curl -X POST "https://na1.fusion.foxit.com/pdf-services/api/documents/pdf-structural-extract" \
  -H "client_id: YOUR_CLIENT_ID" \
  -H "client_secret: YOUR_CLIENT_SECRET" \
  -H "Content-Type: application/json" \
  -d '{"documentId":"doc_abc123"}'
# 202 Accepted: {"taskId":"task_xyz789"}

# Step 3: Poll task status
curl "https://na1.fusion.foxit.com/pdf-services/api/tasks/task_xyz789" \
  -H "client_id: YOUR_CLIENT_ID" \
  -H "client_secret: YOUR_CLIENT_SECRET"
# {"taskId":"task_xyz789","status":"COMPLETED","progress":100,"resultDocumentId":"result_def456"}

# Step 4: Download the result ZIP
curl "https://na1.fusion.foxit.com/pdf-services/api/documents/result_def456/download" \
  -H "client_id: YOUR_CLIENT_ID" \
  -H "client_secret: YOUR_CLIENT_SECRET" \
  -o extraction_result.zip
```

The Python version with a polling loop and ZIP parsing:

```python
import requests, json, time, zipfile

BASE_URL = "https://na1.fusion.foxit.com/pdf-services/api"
HEADERS = {"client_id": "YOUR_CLIENT_ID", "client_secret": "YOUR_CLIENT_SECRET"}

# Step 1: Upload
with open("invoice_batch.pdf", "rb") as f:
    doc_id = requests.post(
        f"{BASE_URL}/documents/upload", headers=HEADERS, files={"file": f}
    ).json()["documentId"]

# Step 2: Start extraction
task_id = requests.post(
    f"{BASE_URL}/documents/pdf-structural-extract",
    headers={**HEADERS, "Content-Type": "application/json"},
    json={"documentId": doc_id},
).json()["taskId"]

# Step 3: Poll until COMPLETED or FAILED
while True:
    task = requests.get(f"{BASE_URL}/tasks/{task_id}", headers=HEADERS).json()
    if task["status"] == "COMPLETED":
        result_doc_id = task["resultDocumentId"]
        break
    if task["status"] == "FAILED":
        raise RuntimeError(f"Extraction failed: {task.get('error')}")
    time.sleep(2)

# Step 4: Download the result ZIP and save it locally for inspection,
# then parse StructureInfo.json from the saved file
response = requests.get(
    f"{BASE_URL}/documents/{result_doc_id}/download", headers=HEADERS
)
with open("advanced-extraction-result.zip", "wb") as f:
    f.write(response.content)

with zipfile.ZipFile("advanced-extraction-result.zip") as zf:
    json_name = next(n for n in zf.namelist() if n.endswith("StructureInfo.json"))
    result = json.loads(zf.read(json_name))["analyzeResult"]

print(f"Schema: {result['version']['schema']}, Elements: {len(result['elements'])}")
```

On a clean run you should see output like Schema: 1.0.7, Elements: 9 for a small invoice batch. You’ll also find a fresh advanced-extraction-result.zip next to your script. That ZIP holds the full API response, including StructureInfo.json and any rendered image or table binaries, so you can inspect everything the engine returned and not just the parsed JSON.

First, set up and activate a Python virtual environment in your project folder. The official venv guide covers the exact commands for macOS, Linux, and Windows.

Once the virtualenv is active, the sample only needs one third-party package. Drop this into a requirements.txt next to your script and install it with pip install -r requirements.txt:

requests>=2.31.0

If you’re on macOS, use Homebrew Python (brew install python) rather than the system Python from the Xcode command-line tools. The Xcode build is linked against LibreSSL, which is enough to make a correct sample fail.
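The sample loop above polls indefinitely, which is fine for a demo but risky in a batch job. Below is a hedged sketch of a bounded polling helper with a gentle backoff. The function name `wait_for_task`, the `ExtractionFailed` exception, and the timeout and backoff values are assumptions chosen for illustration; the status values and the `resultDocumentId` and `error` fields are the ones described in Step 3.

```python
import time
from typing import Callable


class ExtractionFailed(RuntimeError):
    """Raised when the task reports status FAILED."""


def wait_for_task(
    fetch_status: Callable[[], dict],
    timeout_s: float = 300.0,
    interval_s: float = 2.0,
    max_interval_s: float = 15.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> str:
    """Poll until COMPLETED and return resultDocumentId, or raise on FAILED/timeout."""
    deadline = clock() + timeout_s
    while True:
        task = fetch_status()
        status = task.get("status")
        if status == "COMPLETED":
            return task["resultDocumentId"]
        if status == "FAILED":
            # The failure reason lives in the task body, not in an HTTP status code.
            raise ExtractionFailed(str(task.get("error")))
        if clock() >= deadline:
            raise TimeoutError(f"task still {status} after {timeout_s}s")
        sleep(interval_s)
        interval_s = min(interval_s * 1.5, max_interval_s)  # back off gradually


# Offline demonstration with canned responses instead of real HTTP calls.
canned = iter([
    {"status": "PENDING", "progress": 0},
    {"status": "IN_PROGRESS", "progress": 50},
    {"status": "COMPLETED", "progress": 100, "resultDocumentId": "result_def456"},
])
result_id = wait_for_task(lambda: next(canned), sleep=lambda _s: None)
```

In the real script, `fetch_status` would be something like `lambda: requests.get(f"{BASE_URL}/tasks/{task_id}", headers=HEADERS).json()`. Injecting the fetcher and the sleep function keeps the helper testable without network access.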

The ZIP contains a StructureInfo.json file whose top-level object wraps everything under analyzeResult. Inside that wrapper you get a version object, a pages array, a flat elements array, and an info block with analysis metadata. Each element carries its own id, type, content, region (with page and an 8-point boundingBox polygon [x1,y1,x2,y2,x3,y3,x4,y4]), and a score confidence value:

```json
{
  "analyzeResult": {
    "version": {
      "schema": "1.0.7",
      "software": "FoxitPDFAnalyzer",
      "model": "idp-analysis"
    },
    "pages": [
      {
        "pageNumber": 1,
        "size": { "width": 612, "height": 792, "unit": "point" },
        "state": "success"
      }
    ],
    "elements": [
      {
        "id": "title1",
        "type": "title",
        "content": {
          "text": "Q3 Revenue Summary",
          "style": {
            "fontName": "Helvetica",
            "fontSize": 24.0,
            "fontWeight": 0,
            "fontItalic": false
          }
        },
        "region": {
          "page": 1,
          "boundingBox": [72, 47, 317, 47, 317, 80, 72, 80]
        },
        "score": 0.76
      }
    ],
    "info": {
      "basicInfo": {
        "softwareVersion": "1.6.0",
        "analyzedPageCount": 1,
        "elementCounts": { "title": 1 }
      },
      "extendedMetadata": {
        "pageCount": 1,
        "isEncrypted": false,
        "hasAcroform": false,
        "language": "en"
      }
    }
  }
}
```

Elements of type table, image, and form carry additional type-specific payload on top of this base shape, and any rendered image or table binary lands as a sibling file inside the ZIP referenced from the element.

HTTP errors return a standard error envelope:

```json
{ "code": "VALIDATION_ERROR", "message": "documentId is required" }
```

The documented error codes include VALIDATION_ERROR (400), MAX_UPLOAD_SIZE_EXCEEDED (413), DOCUMENT_NOT_FOUND (404), STORAGE_ERROR, and INTERNAL_SERVER_ERROR (500).
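One way to handle the error envelope is to turn it into a typed exception so callers can branch on the code. This is a sketch only: `FoxitApiError`, `parse_error`, and `is_retryable` are hypothetical names, and the choice of which codes to retry is an assumption on my part, not documented API behavior.

```python
class FoxitApiError(Exception):
    """Wraps the {code, message} error envelope plus the HTTP status."""

    def __init__(self, status: int, code: str, message: str):
        super().__init__(f"{status} {code}: {message}")
        self.status = status
        self.code = code
        self.message = message


# Assumption: server-side faults are worth retrying, client-side ones are not.
RETRYABLE_CODES = {"STORAGE_ERROR", "INTERNAL_SERVER_ERROR"}


def parse_error(status: int, body: dict) -> FoxitApiError:
    """Build an exception from an error response body, tolerating missing keys."""
    return FoxitApiError(status, body.get("code", "UNKNOWN"), body.get("message", ""))


def is_retryable(err: FoxitApiError) -> bool:
    return err.code in RETRYABLE_CODES


err = parse_error(400, {"code": "VALIDATION_ERROR", "message": "documentId is required"})
print(err, "| retry:", is_retryable(err))
```

With `requests`, you would call `parse_error(resp.status_code, resp.json())` whenever `resp.ok` is false, then decide whether to retry or surface the error.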

Password-protected PDFs that arrive with no password parameter reach the processing stage before failing. That failure surfaces in the task status poll response after status reaches FAILED, so your error handler must inspect the task response body in addition to the HTTP status codes from the initial POST calls:

```json
{
  "taskId": "task_xyz789",
  "status": "FAILED",
  "progress": 0,
  "error": {
    "code": "INTERNAL_SERVER_ERROR",
    "message": "Document is password-protected"
  }
}
```

Wiring Extracted PDF Data Into Your Workflow

Pattern 1: AI/RAG pipeline. Filter the flat elements array to title, head, and paragraph types. Chunk by heading hierarchy, iterating over the array in the order the engine returned it (document reading order is preserved across columns and pages). Embed each chunk and index in Pinecone, pgvector, or your vector store of choice. Correct reading order, as provided by the extraction engine, is the prerequisite for accurate RAG retrieval on multi-column and paginated documents. When chunks split mid-thought because a layout detector merged two columns, retrieval recall drops and answer quality follows.
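The chunking step in Pattern 1 might look like the sketch below. It assumes head and paragraph elements expose their text at `content.text`, as the title element does in the schema sample; `chunk_by_heading` is a hypothetical helper, and the sample elements are invented for illustration.

```python
def chunk_by_heading(elements: list[dict]) -> list[dict]:
    """Group paragraphs under the most recent title/head, in array order."""
    chunks: list[dict] = []
    heading, body = None, []
    for el in elements:
        kind = el.get("type")
        if kind not in ("title", "head", "paragraph"):
            continue  # skip tables, headers/footers, images, and so on
        text = el.get("content", {}).get("text", "")
        if kind == "paragraph":
            body.append(text)
            continue
        # A new heading closes the previous chunk (headings with no body are dropped).
        if body:
            chunks.append({"heading": heading, "text": "\n".join(body)})
        heading, body = text, []
    if body:
        chunks.append({"heading": heading, "text": "\n".join(body)})
    return chunks


# Invented sample in reading order.
elements = [
    {"type": "title", "content": {"text": "Q3 Revenue Summary"}},
    {"type": "paragraph", "content": {"text": "Revenue grew."}},
    {"type": "headerFooter", "content": {"text": "Page 1"}},
    {"type": "head", "content": {"text": "Outlook"}},
    {"type": "paragraph", "content": {"text": "Demand is stable."}},
    {"type": "paragraph", "content": {"text": "Costs are flat."}},
]
chunks = chunk_by_heading(elements)
```

Each chunk keeps its heading alongside the body text, so you can prepend the heading to the embedded passage or store it as metadata in the vector index.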

Pattern 2: BI reporting. Filter elements by type == "table" client-side, then convert each table’s cell structure into a pandas DataFrame:

```python
import pandas as pd

# `result` is the `analyzeResult` object loaded from StructureInfo.json
tables = [e for e in result["elements"] if e["type"] == "table"]

for i, tbl in enumerate(tables):
    # Cells live at content.body.cells[]. Each cell carries rowIndex,
    # columnIndex, and a nested paragraph whose content.text holds the value.
    body = tbl["content"]["body"]
    grid = [["" for _ in range(body["columnCount"])] for _ in range(body["rowCount"])]
    for cell in body.get("cells", []):
        text = cell.get("paragraph", {}).get("content", {}).get("text", "")
        grid[cell["rowIndex"]][cell["columnIndex"]] = text

    df = pd.DataFrame(grid[1:], columns=grid[0])  # first row as header
    print(f"Table {i}: {df.shape[0]} rows x {df.shape[1]} cols")

    # df.to_gbq("finance.q3_revenue", project_id="your-project")  # BigQuery
    # df.to_sql("q3_revenue", engine)  # Postgres / Snowflake
```

The row and column indices from the extraction schema map directly to DataFrame positions, so you get a correctly structured table with zero manual parsing.

Pattern 3: n8n automation. The four-step flow maps to a chain of HTTP Request nodes in n8n. The first node uploads to POST .../upload and passes documentId through the item. The second sends POST .../pdf-structural-extract and captures taskId. A Loop Over Items construct with an HTTP Request node calling GET .../tasks/{taskId} on a two-second interval checks status until COMPLETED, then routes to the download node. The final HTTP Request node calls GET .../documents/{resultDocumentId}/download, and a Code node using n8n’s binary data helpers unpacks the ZIP and parses the JSON for routing to a Salesforce, HubSpot, Postgres, or Airtable node. The polling requirement makes this a multi-node workflow, but apart from that one Code node you write no custom glue code, and you gain n8n’s built-in error routing and retry handling.

PDF Extraction Tools Compared: Foxit vs. Adobe, Google, Amazon, and Azure

| Tool | Underlying Approach | Ecosystem Lock-in | Handles Scanned PDFs | Pricing Model | Setup Overhead | Status |
|---|---|---|---|---|---|---|
| Foxit Structural Extraction | Proprietary OCR + layout recognition + AI (integrated core engine) | Cloud-agnostic REST API | Yes (dedicated OCR layer) | Subscription, no per-page credits | Low (2 credential headers, 4 REST calls) | Trial (schema v1.0.7) |
| Adobe PDF Extract API | Adobe Sensei ML, reading order + renditions | Adobe Document Services | Yes | Contact sales | Medium (Adobe SDK + ecosystem) | GA |
| Google Document AI | Cloud ML + generative AI, Document Object Model | Google Cloud required | Yes | Per-page pay-as-you-go | Medium-high (GCP + IAM) | GA |
| Amazon Textract | Deep learning OCR, key-value and table extraction | AWS-native | Partial (strong on forms, weaker on complex layouts) | Per-page pay-as-you-go | Medium (AWS + IAM) | GA |
| Azure Document Intelligence | Prebuilt + custom ML models | Azure ecosystem | Yes (prebuilt models) | Per-page + model training costs | High for custom models | GA |

Google Document AI and Azure Document Intelligence win on ecosystem integration if you’re all-in on those clouds. Adobe wins on PDF structural fidelity for workflows already inside the Adobe Document Services ecosystem. Amazon Textract excels on standardized form documents where its pre-trained schema fits the input. These are real advantages, and the comparison is honest only when those contexts are acknowledged.

Foxit’s case is strongest when you need a cloud-agnostic REST API with zero ecosystem dependency, full object coverage across all twelve element types, and enterprise throughput (10 to 10,000+ PDFs/day) with SOC 2, GDPR, and HIPAA compliance built in. The Trial status of Structural Extraction is a real trade-off to factor in. The schema at v1.0.7 is callable and stable enough for pipeline integration today, but GA competitors carry a finalized contract. Pin your parser to the version field in the response so that a schema change surfaces as an explicit version mismatch instead of a silent parsing error.
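Pinning to the version field can be as small as the guard below. It is a sketch under the assumption that you maintain an allow-list of schema versions your parser has been tested against; `SUPPORTED_SCHEMAS`, `UnsupportedSchema`, and `require_supported_schema` are hypothetical names.

```python
SUPPORTED_SCHEMAS = {"1.0.7"}  # versions this parser has been tested against


class UnsupportedSchema(ValueError):
    """Raised when a result carries a schema version the parser has not been tested on."""


def require_supported_schema(analyze_result: dict) -> str:
    """Return the schema version, or fail loudly before any parsing happens."""
    schema = analyze_result.get("version", {}).get("schema")
    if schema not in SUPPORTED_SCHEMAS:
        raise UnsupportedSchema(
            f"schema {schema!r} not in tested set {sorted(SUPPORTED_SCHEMAS)}"
        )
    return schema


checked = require_supported_schema({"version": {"schema": "1.0.7"}})
```

Calling the guard first means a schema bump stops the pipeline with a clear message, and you extend the allow-list only after re-validating the parser against the new version.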

Your First PDF Extraction API Call, Right Now

Go to developer-api.foxit.com, create a free developer account (no credit card required), and copy your Client ID and Client Secret from the default application. Use the built-in API Playground or import the Postman collection from the Developer Portal to run the four-step sequence: upload a real document (an invoice, a multi-page contract, or a scanned form), call pdf-structural-extract with the returned documentId, poll tasks/{taskId} until COMPLETED, then download via documents/{resultDocumentId}/download.

Unzip the result, open StructureInfo.json, and check three things: analyzeResult.version.schema should report 1.0.7, analyzeResult.elements[] should contain at least one table element and one form element if your source document includes those, and the ZIP root should contain the corresponding binary files for any image-type elements. That verification confirms the full extraction pipeline is wired correctly end-to-end.
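Those three checks can be scripted rather than eyeballed. The sketch below inspects a result ZIP held in memory and reports the schema version, the per-type element counts, and the non-JSON files found in the archive. `inspect_result_zip` is a hypothetical helper, and the demo ZIP (including the file name `image1.png`) is fabricated purely so the example runs offline.

```python
import io
import json
import zipfile
from collections import Counter


def inspect_result_zip(zip_bytes: bytes) -> dict:
    """Summarize a Structural Extraction result archive."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as zf:
        names = zf.namelist()
        json_name = next(n for n in names if n.endswith("StructureInfo.json"))
        result = json.loads(zf.read(json_name))["analyzeResult"]
    counts = Counter(e["type"] for e in result["elements"])
    return {
        "schema": result["version"]["schema"],
        "element_counts": dict(counts),
        "other_files": [n for n in names if n != json_name],
    }


# Fabricated stand-in for a downloaded result, for demonstration only.
buf = io.BytesIO()
with zipfile.ZipFile(buf, "w") as zf:
    payload = {
        "analyzeResult": {
            "version": {"schema": "1.0.7"},
            "elements": [{"type": "table"}, {"type": "form"}, {"type": "image"}],
        }
    }
    zf.writestr("StructureInfo.json", json.dumps(payload))
    zf.writestr("image1.png", b"not a real image")

report = inspect_result_zip(buf.getvalue())
print(report)
```

Against a real download, pass `response.content` from the Step 4 request and compare the report with what you know is in the source document.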

The same endpoint pattern scales to enterprise volumes. Increase upload and poll concurrency horizontally and the architecture stays identical, with no schema changes, no infrastructure modifications, and no per-page credit consumption to track.
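Scaling out can be sketched with a thread pool, since each document spends most of its time waiting on network calls. The helper `extract_many` and the worker count are assumptions; `extract_one` stands in for whatever function wraps your own upload, poll, and download sequence, and any rate limits on your plan are something to confirm separately.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed
from typing import Callable


def extract_many(
    paths: list[str],
    extract_one: Callable[[str], dict],
    max_workers: int = 8,
) -> tuple[dict, dict]:
    """Run extract_one over many files concurrently; collect results and failures."""
    results: dict[str, dict] = {}
    failures: dict[str, Exception] = {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(extract_one, p): p for p in paths}
        for fut in as_completed(futures):
            path = futures[fut]
            try:
                results[path] = fut.result()
            except Exception as exc:  # keep going; one bad file should not stop the batch
                failures[path] = exc
    return results, failures


# Stand-in worker so the sketch runs without network access.
def fake_extract(path: str) -> dict:
    if path.endswith("broken.pdf"):
        raise RuntimeError("Extraction failed")
    return {"source": path, "elements": []}


ok, bad = extract_many(["a.pdf", "b.pdf", "broken.pdf"], fake_extract, max_workers=2)
```

Collecting failures per file, rather than raising on the first one, lets a nightly batch finish and report which documents need a retry.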

The engineering gap between what basic extraction libraries return and what downstream systems actually consume is where document pipeline hours accumulate. Structural Extraction closes that gap at the API layer, so the complexity stays in the engine and out of your codebase. Get started at developer-api.foxit.com.

PDF Structural Extraction FAQ

What is PDF structural extraction?

PDF structural extraction is the process of identifying and classifying the semantic elements inside a PDF, such as titles, paragraphs, tables, forms, images, and annotations, rather than just pulling raw text. Foxit’s PDF Structural Extraction API returns twelve distinct element types as structured JSON, preserving spatial relationships, reading order, and table cell grids so downstream systems like RAG pipelines, BI dashboards, and CRMs can consume the data without manual parsing.

Can Foxit's API extract text from scanned PDFs?

Yes. Foxit’s PDF Structural Extraction engine includes a dedicated OCR layer that recognizes characters from image-based and scanned PDFs across 200+ languages. The OCR runs on the same internal page representation as the rendering engine, so it handles edge cases like text overlapping image regions, stamped signatures, and engineering drawing annotations that basic libraries like PyMuPDF silently drop.

How does Foxit's PDF extraction API differ from Adobe, Google Document AI, and Amazon Textract?

Foxit’s API is cloud-agnostic with no ecosystem lock-in, requiring just two credential headers and four REST calls. Adobe PDF Extract requires the Adobe Document Services ecosystem, Google Document AI requires GCP and IAM setup, and Amazon Textract requires AWS infrastructure. Foxit also uses subscription-based pricing without per-page credits, while Google, AWS, and Azure all charge per page.

What PDF elements can Foxit's Structural Extraction API identify?

The API identifies twelve element types: title, head, paragraph, table, image, headerFooter, form, hyperlink, footnote, sidebar, annotation, and formula. Each element returns with its content, an 8-point bounding box polygon, page location, and a confidence score. Tables include full cell grids with row and column indices, forms include field data, and images are extracted as separate binary files inside the result ZIP.

How do I call the Foxit PDF Structural Extraction API?

The API uses a four-step asynchronous flow: upload the PDF via POST /documents/upload to get a documentId, start extraction with POST /documents/pdf-structural-extract, poll GET /tasks/{taskId} every two seconds until status is COMPLETED, then download the result ZIP via GET /documents/{resultDocumentId}/download. Authentication uses two headers, client_id and client_secret, available from the default application in the Foxit Developer Portal.

Is the Foxit PDF Structural Extraction API ready for production use?

The endpoint is currently in Trial status with schema version v1.0.7, meaning the contract is stable enough to build against but may evolve. It runs on the production base URL at developer-api.foxit.com and is built on Foxit’s core PDF engine, which powers 700 million+ users across 20+ years of deployments. For production pipelines, pin your parser to the version field in the response so that future schema changes surface as explicit version mismatches.

Foxit MCP Server: Give AI Agents Direct Access to 30+ PDF Tools via Model Context Protocol

Learn how the Foxit MCP Server lets AI agents handle PDF conversion, OCR, merge, signing, and document workflows.

Building a document automation agent with raw REST calls means writing the same boilerplate every time: upload a file, poll for task completion, download the result, handle errors, and manage auth tokens across multiple endpoints. For PDF operations, that loop repeats for every conversion, OCR call, or merge operation in your pipeline. The Foxit PDF API MCP Server collapses those loops into 30+ directly callable tools, with the MCP Server handling upstream REST complexity internally.

This guide covers how the server registers, what it exposes, how Foxit’s eSign and DocGen REST APIs extend the same agent session into signing and document generation workflows, and a concrete four-step workflow you can replicate against your own documents.

MCP Architecture in 90 Seconds

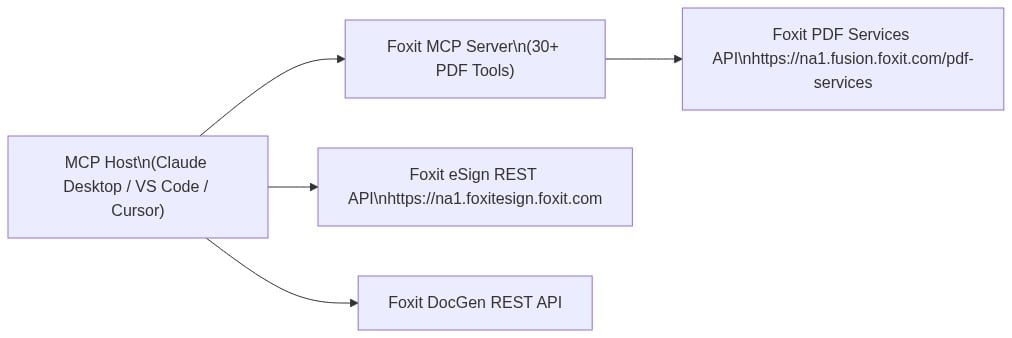

The MCP specification defines three roles. The Host is the LLM runtime (Claude Desktop, VS Code with GitHub Copilot, or Cursor) that manages the conversation and decides when to call tools. The Server is the capability provider, a process that advertises tools over the MCP protocol and executes them against some underlying service. Tools are the individual callable operations each server exposes, defined by a JSON schema the host uses to understand inputs and outputs.
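To illustrate what a tool definition looks like from the host's side, here is a sketch of a definition with `name`, `description`, and `inputSchema` keys, plus a tiny required-argument check. The schema shown for `pdf_from_word` is a guess for illustration, not the one the Foxit server actually advertises, and `missing_required` is a hypothetical helper.

```python
# Hypothetical tool definition; the real server's description and schema may differ.
tool = {
    "name": "pdf_from_word",
    "description": "Convert an uploaded Word document to PDF.",
    "inputSchema": {
        "type": "object",
        "properties": {"documentId": {"type": "string"}},
        "required": ["documentId"],
    },
}


def missing_required(tool_def: dict, arguments: dict) -> list[str]:
    """List required input names that the proposed call does not supply."""
    required = tool_def["inputSchema"].get("required", [])
    return [name for name in required if name not in arguments]


print(missing_required(tool, {}))
print(missing_required(tool, {"documentId": "doc_abc123"}))
```

The host performs this kind of check, and the model reads the same schema to decide which arguments to produce, which is why precise schemas matter more than long descriptions.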

Foxit occupies both sides of this architecture. Foxit PDF Editor ships as an MCP Host, the first PDF application to do so, connecting outward to external MCP servers like Gmail or Salesforce so its AI assistant can reach those services. The Foxit PDF API MCP Server works in the other direction, exposing Foxit’s cloud PDF Services API as 30+ tools for any MCP Host to call.

The MCP Server exposes PDF Services operations: conversion between formats, content extraction, OCR, merge, split, compress, flatten, linearize, compare, watermark, form data import/export, security, and property inspection. Foxit’s eSign API and DocGen API are separate REST services that a single agent session can also reach. The MCP tools handle PDF processing, while direct HTTP calls to eSign and DocGen handle signing and template generation.

Prerequisites and Configuration

You need three things before registering the server:

- A Foxit developer account (free plan at developer-api.foxit.com, no credit card required) to obtain a client_id and client_secret
- Python 3.11+ with the uv package manager (or Node.js 18+ with pnpm for the TypeScript version)
- An MCP-compatible host such as VS Code with GitHub Copilot, Claude Desktop, or Cursor

Clone the repo from github.com/foxitsoftware/foxit-pdf-api-mcp-server, then register it in your host’s MCP config. For VS Code with GitHub Copilot, add the following to .vscode/mcp.json:

```json
{
  "servers": {
    "foxit-pdf": {
      "command": "uv",
      "args": [
        "--directory",
        "/path/to/foxit-pdf-api-mcp-server",
        "run",
        "foxit-pdf-api-mcp-server"
      ],
      "env": {
        "FOXIT_CLOUD_API_HOST": "https://na1.fusion.foxit.com/pdf-services",
        "FOXIT_CLOUD_API_CLIENT_ID": "your_client_id",
        "FOXIT_CLOUD_API_CLIENT_SECRET": "your_client_secret"
      }
    }
  }
}
```

For Claude Desktop, the same three environment variables go into the env block of your claude_desktop_config.json under the mcpServers key, with command and args matching the structure above.

Set FOXIT_CLOUD_API_CLIENT_ID and FOXIT_CLOUD_API_CLIENT_SECRET as environment variables on your system before the host process launches. Never pass credentials through prompt context, where they can leak into model inputs and logs. The client_id and client_secret from your developer portal authenticate all MCP tool calls to the PDF Services API. Adding eSign to the same agent session requires its own OAuth2 token exchange (covered in the eSign section below), keeping the two credential scopes isolated.

Restart your MCP host after saving the config. The server advertises all tools to the host on connection, so your agent can inspect available operations before invoking any.
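The Claude Desktop variant described above can be generated rather than hand-edited. This sketch builds the `mcpServers` entry as a Python dict and prints it as JSON; `claude_desktop_entry` is a hypothetical helper, and the server key `foxit-pdf` simply mirrors the VS Code example.

```python
import json


def claude_desktop_entry(
    repo_dir: str,
    client_id: str,
    client_secret: str,
    host: str = "https://na1.fusion.foxit.com/pdf-services",
) -> dict:
    """Build the mcpServers block for claude_desktop_config.json."""
    return {
        "mcpServers": {
            "foxit-pdf": {
                "command": "uv",
                "args": ["--directory", repo_dir, "run", "foxit-pdf-api-mcp-server"],
                "env": {
                    "FOXIT_CLOUD_API_HOST": host,
                    "FOXIT_CLOUD_API_CLIENT_ID": client_id,
                    "FOXIT_CLOUD_API_CLIENT_SECRET": client_secret,
                },
            }
        }
    }


entry = claude_desktop_entry(
    "/path/to/foxit-pdf-api-mcp-server", "your_client_id", "your_client_secret"
)
print(json.dumps(entry, indent=2))
```

Merge the printed block into any existing `mcpServers` object rather than overwriting the file, so other registered servers stay intact.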

PDF Services MCP Tools: Full Catalog

The 30+ tools organize into seven functional categories. Most tools expect a documentId returned by a prior upload_document call, and return a resultDocumentId you pass to download_document when you want the output locally. The exception is pdf_from_url, which accepts a URL directly.

Document Lifecycle

- upload_document: upload a PDF, Office file, image, HTML file, or plain text file; returns a documentId for subsequent operations
- download_document: retrieve a processed result to a local file path
- delete_document: clean up stored files from cloud storage

PDF Creation (file to PDF)

- pdf_from_word, pdf_from_excel, pdf_from_ppt: convert Office documents to PDF
- pdf_from_text, pdf_from_image, pdf_from_html: convert plaintext, image files, or HTML to PDF
- pdf_from_url: fetch a live URL and convert the rendered page to PDF

PDF Conversion (PDF to file)

- pdf_to_word, pdf_to_excel, pdf_to_ppt: extract editable Office formats from a PDF
- pdf_to_text, pdf_to_html, pdf_to_image: export text, HTML, or image representations

Manipulation

- pdf_merge: combine multiple PDFs into one
- pdf_split: split by page ranges, page count, or every page individually
- pdf_extract: pull a subset of pages from a PDF
- pdf_compress: reduce file size by 30-70% depending on content type
- pdf_flatten: convert form fields and annotations to static content (required for compliance archiving workflows)
- pdf_linearize: optimize for Fast Web View so browsers can stream PDF pages incrementally
- pdf_watermark: apply text or image watermarks with configurable position, opacity, and rotation
- pdf_manipulate: rotate, delete, or reorder pages

Analysis

- pdf_compare: diff two PDFs and return a color-coded annotation document showing changes
- pdf_ocr: convert scanned or image-based PDFs to searchable text with multi-language support
- pdf_structural_analysis: extract layouts, tables, images, form fields, metadata, and text as structured JSON

Security and Forms

- pdf_protect: add password protection with 128-bit or 256-bit AES encryption and granular permission flags
- pdf_remove_password: strip password protection from a document
- export_pdf_form_data: extract form field values as JSON
- import_pdf_form_data: populate form fields from a JSON payload

Properties

- get_pdf_properties: return page count, page dimensions, PDF version, encryption status, digital signature info, embedded files, font inventory, and document metadata

A common operation in production document pipelines is pdf_from_word. Your agent uploads a DOCX file, gets back a documentId, then calls pdf_from_word with that ID. The underlying PDF Services API runs the conversion asynchronously, but the MCP Server handles polling internally and delivers the final result directly to your agent.

MCP tool call:

```json
{
  "name": "pdf_from_word",
  "input": {
    "documentId": "doc_abc123"
  }
}
```

Response:

```json
{
  "success": true,
  "taskId": "task_xyz789",
  "resultDocumentId": "doc_result456",
  "message": "Word document converted to PDF successfully. Download using documentId: doc_result456"
}
```

Pass doc_result456 to download_document to write the output PDF to disk, or feed it directly into another tool call like pdf_structural_analysis or pdf_compress as the next step in a chain.
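Chaining works by feeding each call's resultDocumentId into the next call's documentId. The sketch below shows that handoff with an injected `call_tool` function standing in for the host's tool invocation. It assumes every tool in the chain accepts just a `documentId` and returns the same response shape as the pdf_from_word example, which may not hold for tools that need extra parameters; `run_chain` and `fake_call_tool` are hypothetical.

```python
from typing import Callable


def run_chain(
    call_tool: Callable[[str, dict], dict],
    document_id: str,
    steps: list[str],
) -> str:
    """Apply tools in order, threading resultDocumentId into the next call."""
    current = document_id
    for name in steps:
        result = call_tool(name, {"documentId": current})
        if not result.get("success"):
            raise RuntimeError(f"{name} failed: {result.get('message')}")
        current = result["resultDocumentId"]
    return current


# Stand-in for the MCP host, recording the calls it receives.
calls: list[tuple[str, str]] = []


def fake_call_tool(name: str, arguments: dict) -> dict:
    calls.append((name, arguments["documentId"]))
    return {"success": True, "resultDocumentId": f"{arguments['documentId']}>{name}"}


final_id = run_chain(fake_call_tool, "doc_abc123", ["pdf_from_word", "pdf_compress"])
```

In an agent session the model usually drives this sequencing itself; an explicit chain like this is more useful in scripted pipelines where the order of operations is fixed.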

Extending to eSign: Foxit’s Signing API as a Complementary REST Layer

After PDF processing via MCP tools, your agent can dispatch a document for signature by calling Foxit’s eSign REST API directly. The eSign API lives at https://na1.foxitesign.foxit.com with regional variants for EU (eu1.foxitesign.foxit.com), Canada (na2.foxitesign.foxit.com), and Australia (au1.foxitesign.foxit.com). These are direct HTTP calls from your agent to the eSign endpoints, coordinated alongside MCP tool calls in the same session.

Authentication uses OAuth2 client_credentials. The eSign token exchange is a distinct flow from the PDF Services header auth that backs your MCP tools:

import requests

resp = requests.post(

"https://na1.foxitesign.foxit.com/api/oauth2/access_token",

data={

"client_id": ESIGN_CLIENT_ID,

"client_secret": ESIGN_CLIENT_SECRET,

"grant_type": "client_credentials",

"scope": "read-write"

}

)

access_token = resp.json()["access_token"]

The Foxit eSign API developer guide uses “folder” terminology throughout. The key endpoints in an automated signing flow are:

- POST /folders/createfolder: create a signing folder from one or more PDF documents, define signers, subject, and message

- POST /folders/sendDraftFolder: dispatch the folder to signers

- POST /templates/createtemplate: instantiate a folder from a saved template with pre-placed signature fields

- GET /folders/getFolderHistory: retrieve the full activity audit trail for a folder

- Webhook channels for status callbacks: register a callback URL to receive real-time events when signers view, sign, or decline

A createfolder call takes the PDF output from your MCP pipeline, uploaded to eSign’s document storage after download_document retrieves it, and sets up the signing workflow:

POST /api/folders/createfolder

Authorization: Bearer {access_token}

Content-Type: application/json

{

"folderName": "Acme Corp Contract - Q3 2025",

"sendNow": false,

"fileUrls": [

"https://your-storage.example.com/acme_contract_final.pdf"

],

"fileNames": [

"acme_contract_final.pdf"

],

"parties": [

{

"firstName": "John",

"lastName": "Smith",

"emailId": "[email protected]",

"permission": "FILL_FIELDS_AND_SIGN",

"sequence": 1

}

]

}

Set sendNow to false to create a draft folder, then dispatch it with a separate call to /folders/sendDraftFolder. Alternatively, set sendNow to true to create and send in a single call. For files not accessible via URL, use base64FileString instead of fileUrls.
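The choice between fileUrls and base64FileString can be wrapped in a small helper so the agent never sends both. This is a sketch only: the field names are the ones used in the request above, but the helper name is hypothetical and the shape of base64FileString (a list parallel to fileNames) is an assumption to confirm against the eSign API reference.

```python
import base64


def build_createfolder_payload(folder_name, parties, file_name,
                               file_url=None, pdf_bytes=None, send_now=False):
    """Assemble a createfolder body from either a file URL or raw PDF bytes."""
    # Exactly one source must be supplied
    if (file_url is None) == (pdf_bytes is None):
        raise ValueError("Provide exactly one of file_url or pdf_bytes")
    payload = {
        "folderName": folder_name,
        "sendNow": send_now,
        "fileNames": [file_name],
        "parties": parties,
    }
    if file_url is not None:
        payload["fileUrls"] = [file_url]
    else:
        # Assumption: base64FileString is a list parallel to fileNames
        payload["base64FileString"] = [base64.b64encode(pdf_bytes).decode("ascii")]
    return payload
```

Leaving send_now at its default of False matches the draft-then-dispatch flow described above, which gives the agent a chance to inspect the folder before anything reaches a signer.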

Foxit’s eSign API ships with HIPAA, eIDAS, ESIGN Act, UETA, 21 CFR Part 11, FERPA, and FINRA compliance built in. Audit trail records carry signer location, IP address, recipient identity, event timestamp, consent confirmation, security level, and complete folder history. For legal defensibility in regulated industries, capture and store these fields in your own data layer, because relying solely on Foxit’s folder history API for compliance record-keeping introduces a single point of failure in your audit chain.

End-to-End Workflow: AI Agent Automates a Sales Contract

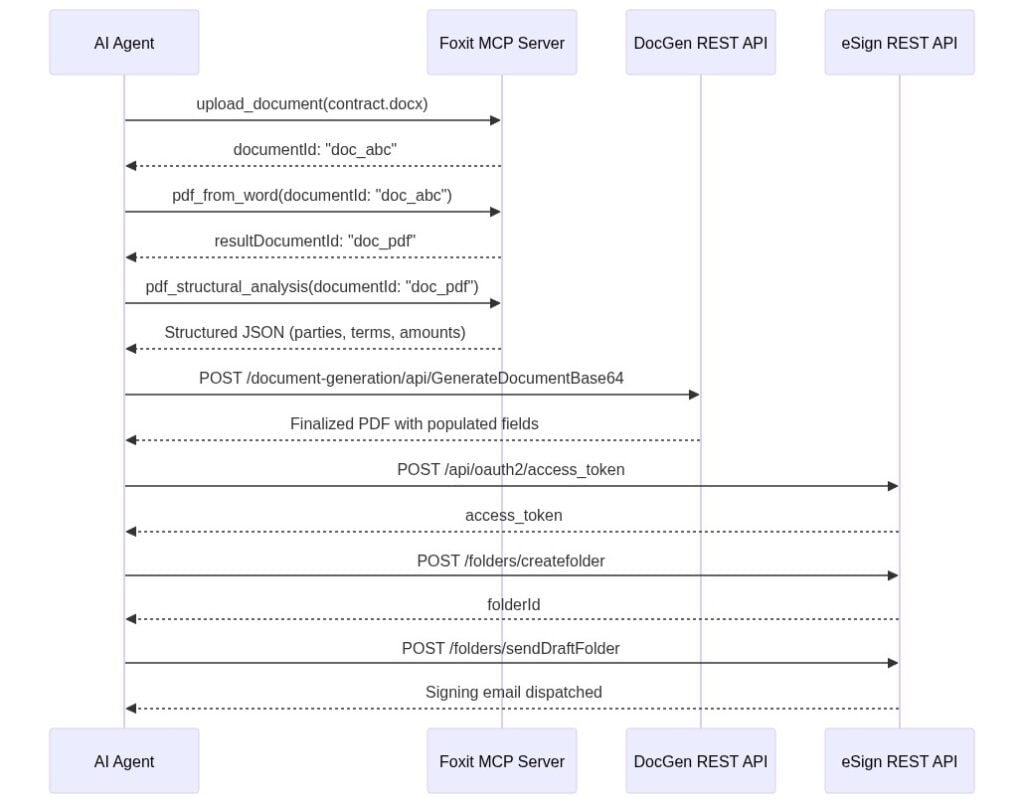

Your sales ops agent receives a natural language instruction: “Generate a contract for Acme Corp, $48,000 ARR, send to [email protected] for signature.” The agent handles every step autonomously. Each call is labeled as either an MCP tool invocation or a direct REST call.

Step 1 uses MCP tool calls. The agent calls upload_document with the DOCX contract template, receives documentId: "doc_abc", then calls pdf_from_word. The MCP Server handles the async conversion and returns resultDocumentId: "doc_pdf" once it completes.

Step 2 uses an MCP tool call. The agent calls pdf_structural_analysis with documentId: "doc_pdf". The tool extracts party names, deal terms, and table data as JSON. The agent validates that required fields are present before proceeding.

Step 3 is a direct REST call to the DocGen API. The agent posts to /document-generation/api/GenerateDocumentBase64 with the validated field values merged into the contract template via {{dynamic_tags}} syntax. DocGen returns the finalized PDF with Acme Corp’s name, the $48,000 ARR figure, and correct dates populated.

Step 4 uses direct REST calls to the eSign API. The agent authenticates via OAuth2, uploads the DocGen output to eSign’s document storage, creates a signing folder via /folders/createfolder with [email protected] as the signer, and dispatches it via /folders/sendDraftFolder.

The LLM selects MCP tools for PDF processing and direct HTTP calls for eSign and DocGen because your system prompt specifies the endpoint contract for each step. The agent chains outputs across both call types, with coordination logic living in the prompt rather than in custom orchestration code you maintain separately.

Production Considerations: Error Handling, Rate Limits, and Data Governance

When you call PDF Services through the MCP Server, async polling happens inside the server process. Your agent receives a final resultDocumentId only after the task completes. When you call the raw PDF Services REST API directly, every operation returns a taskId you poll manually. The pattern below applies exponential backoff with a ceiling of 10 seconds per interval and a 30-second total timeout:

import time, requests

API_HOST = "https://na1.fusion.foxit.com/pdf-services"

auth_headers = {

"client_id": "your_client_id",

"client_secret": "your_client_secret"

}

def poll_task(task_id: str, max_wait: int = 30) -> str:

    """Poll a PDF Services task until it completes, backing off between checks."""

    delay = 1

    elapsed = 0

    while elapsed < max_wait:

        resp = requests.get(

            f"{API_HOST}/api/tasks/{task_id}",

            headers=auth_headers

        )

        # Surface HTTP-level failures instead of parsing an error body

        resp.raise_for_status()

        data = resp.json()

        if data["status"] == "COMPLETED":

            return data["resultDocumentId"]

        time.sleep(delay)

        elapsed += delay

        # Double the wait each round, capped at 10 seconds

        delay = min(delay * 2, 10)

    raise TimeoutError(f"Task {task_id} timed out after {max_wait}s")

The free developer plan at developer-api.foxit.com covers development and testing volumes. Production workloads above the free-tier threshold require a volume plan requested through the Developer Portal.
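To see what intervals the polling pattern above actually produces, the timing logic can be replayed without sleeping or touching the API. The helper name backoff_schedule is made up for this sketch; it mirrors only the delay arithmetic of poll_task.

```python
def backoff_schedule(max_wait: int = 30, cap: int = 10) -> list[int]:
    """Replay poll_task's delay arithmetic and return each sleep interval."""
    delays = []
    delay, elapsed = 1, 0
    while elapsed < max_wait:
        delays.append(delay)
        elapsed += delay
        # Same doubling-with-ceiling rule as poll_task
        delay = min(delay * 2, cap)
    return delays


schedule = backoff_schedule()
print(schedule, sum(schedule))  # [1, 2, 4, 8, 10, 10] 35
```

The last interval starts at 25 seconds of accumulated sleep, so the worst case sleeps for 35 seconds in total before the TimeoutError fires. If the 30-second budget is a hard limit, clamp the final delay to the time remaining.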

For data governance, all API traffic runs over TLS 1.2+, and documents at rest use AES-256 encryption. Foxit’s API security documentation covers SOC 2 Type II audit status, HIPAA BAA support, GDPR, CCPA, eIDAS, ESIGN Act, UETA, 21 CFR Part 11, FERPA, and FINRA requirements. Customer data runs in logically segmented environments. For healthcare, legal, or financial services pipelines, confirm your data residency requirements before connecting production document flows, because the eu1, na2, and au1 regional eSign endpoints determine where data is processed.

PDF API MCP Server FAQs

What is the Foxit PDF API MCP Server?

The Foxit PDF API MCP Server is an open-source Model Context Protocol server that exposes Foxit’s cloud PDF Services API as 30+ callable tools. Any MCP-compatible AI agent host, including Claude Desktop, VS Code with GitHub Copilot, and Cursor, can invoke these tools directly.

What PDF operations does the Foxit MCP Server support?

The server supports conversion (Word, Excel, PowerPoint, image, HTML, and URL to PDF and back), OCR, merge, split, extract, compress, flatten, linearize, watermark, compare, form data import/export, password protection, and full document property inspection across seven functional tool categories.

How does the Foxit MCP Server handle authentication?

PDF Services tools authenticate via a client_id and client_secret set as environment variables before the MCP host launches. The eSign API uses a separate OAuth2 client_credentials token exchange against https://na1.foxitesign.foxit.com/api/oauth2/access_token. The two credential scopes are isolated by design.

Does the Foxit MCP Server work with Claude Desktop and VS Code?

Yes. The server registers using a standard mcp.json config block for VS Code with GitHub Copilot or a claude_desktop_config.json block for Claude Desktop. The same config structure works for Cursor. All three hosts discover the server’s tools automatically on connection.

Is the Foxit PDF API MCP Server free to use?

The Foxit developer account is free with no credit card required and covers development and testing volumes. Production workloads above the free-tier threshold require a volume plan through the Developer Portal.

Run Your First Tool Call Now

Getting a working MCP tool call takes under 15 minutes:

- Create a free developer account at developer-api.foxit.com (no credit card, instant access). Copy your client_id and client_secret from the dashboard.

- Set the three environment variables:

export FOXIT_CLOUD_API_HOST="https://na1.fusion.foxit.com/pdf-services"

export FOXIT_CLOUD_API_CLIENT_ID="your_client_id"

export FOXIT_CLOUD_API_CLIENT_SECRET="your_client_secret"

- Clone the repo, register it using the config block from the Prerequisites section, restart your MCP host, and invoke pdf_from_url with any public URL. You’ll have a confirmed PDF output in your working directory. The Developer Portal also includes a live API Playground for validating request payloads against the PDF Services API before wiring them into an agent.

For a full signing workflow, the minimum viable addition to the MCP setup is authenticating against the eSign OAuth2 endpoint and posting to /folders/createfolder with a static PDF. DocGen field population, pdf_structural_analysis validation, and webhook callbacks extend the same pattern incrementally from there.

Get your free API access at developer-api.foxit.com.

Automate Dynamic PDF Generation with the Foxit DocGen API: Word Templates, JSON Data, and Real API Calls

Skip the HTML-to-PDF headaches. Use Foxit’s DocGen API to turn Word templates and JSON data into clean, formatted PDFs with one API call.

If you’ve tried to generate a contract or invoice from HTML, you’ve probably burned hours on page-break-inside: avoid declarations that Chrome renders one way and a headless browser renders another. Headers and footers require separate print-media queries, and by the time you’ve got a repeating table header working correctly across pages, you’ve invested a full day of engineering into CSS that exists solely to trick a browser into behaving like a printer.

HTML documents reflow content into a viewport while PDF documents have fixed page geometry. Forcing one model into the other produces predictable failure modes: footnotes that collide with page footers, tables that split at the worst possible row, custom fonts that substitute silently, and signature blocks that drift off-page on longer documents.

There’s a larger practical cost too. For most teams, the authoritative source for enterprise document templates is already a Word file. Your legal team owns the NDA in .docx format. Finance owns the invoice in .docx format. Every structural change flows through Word because that’s where the tracked changes, formatting history, and review process live. Maintaining a parallel HTML version of each template doubles your maintenance surface from day one.

Foxit’s DocGen API eliminates that parallel entirely. You keep your templates as .docx files, embed data tags directly in Word, POST the base64-encoded template and a JSON payload to a single REST endpoint, and receive the rendered PDF (or DOCX) in the response body. You eliminate the browser rendering engine, the print-media CSS layer, and the overhead of a second template format.

How the Foxit DocGen API Works

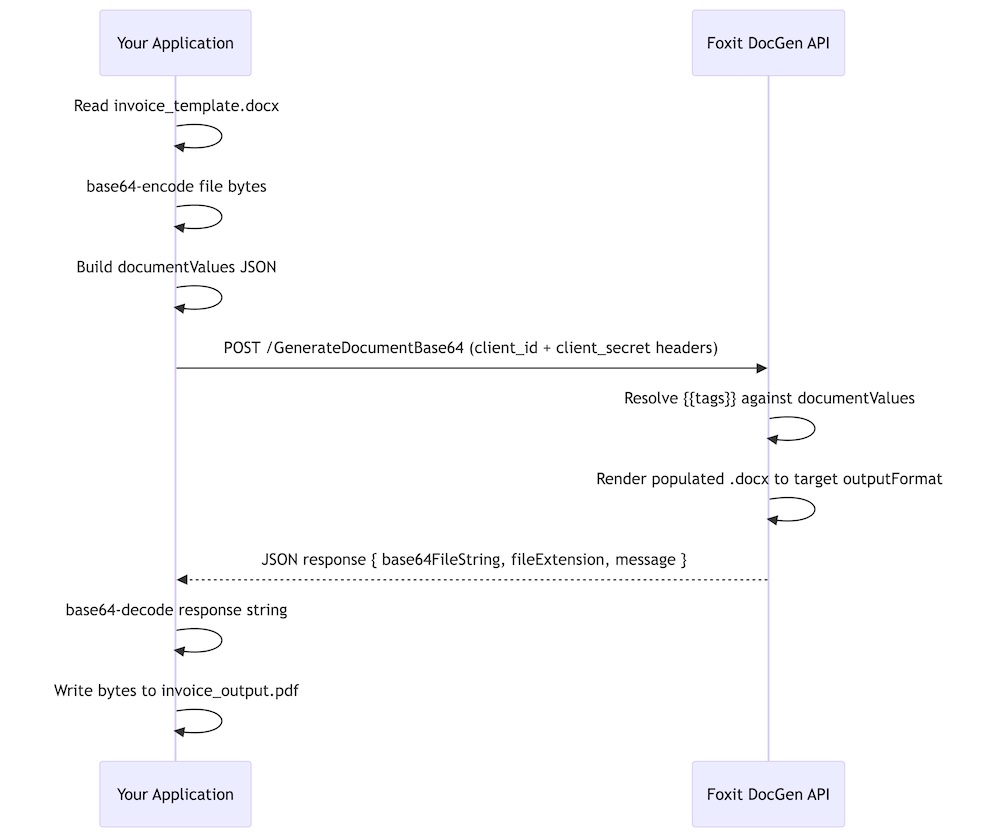

The core model is a single synchronous POST to the GenerateDocumentBase64 endpoint at developer-api.foxit.com. Your request body carries three fields:

- base64FileString: your .docx template, base64-encoded

- documentValues: a JSON object containing your merge data

- outputFormat: either "pdf" or "docx"

The API processes the template, resolves every tag against your data, and returns a JSON response containing base64FileString (the rendered document) and a message field confirming success or describing a failure. The exchange is fully synchronous, so you receive the finished document in the same HTTP response with no job ID to poll and no webhook to configure.

Authentication uses two HTTP headers: client_id and client_secret. Both come from the Foxit Developer Portal when you create an account. The free Developer plan provides 500 credits per year with no credit card required, and each GenerateDocumentBase64 call consumes exactly one credit. The Startup plan ($1,750/year) provides 3,500 credits. The Business plan ($4,500/year) covers 150,000 credits for production workloads. For context, Nutrient’s API starts at $75 for 1,000 credits, and Apryse requires a sales conversation before you can access pricing at all.
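Since each GenerateDocumentBase64 call consumes one credit, the plan figures above translate directly into a per-document cost. The snippet below is just that arithmetic, using the prices and credit counts quoted in this section; the variable and function names are illustrative.

```python
# Paid plan figures quoted above: (annual price in USD, credits per year)
plans = {
    "Startup": (1750, 3500),
    "Business": (4500, 150_000),
}


def cost_per_credit(price_usd: float, credits: int) -> float:
    """Price of one credit, which equals one generated document."""
    return price_usd / credits


for name, (price, credits) in plans.items():
    print(f"{name}: ${cost_per_credit(price, credits):.3f} per generated document")
```

On these numbers the Startup plan works out to $0.50 per document and the Business plan to $0.03.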

The complete call flow runs from template file to PDF on disk.

You can explore every endpoint in the live API playground at developer-api.foxit.com, and the portal includes a Postman collection you can import to run authenticated requests without writing a line of code first.

Build a Word Template with DocGen Tags

Open any .docx file in Microsoft Word and type your tags as plain text directly in the document. The DocGen API uses double-brace syntax: {{field_name}}. Tags go anywhere Word accepts text: headings, body paragraphs, table cells, headers, footers, or text boxes.

Scalar field tags resolve directly to the matching key from your documentValues JSON. A document header with {{customer_name}}, {{invoice_number}}, and {{invoice_date}} pulls those three values straight from the top-level keys of your payload.

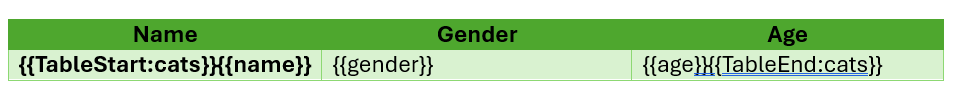

For arrays, you wrap a single table row (the data row, not the header row) with {{TableStart:array_name}} and {{TableEnd:array_name}} markers. The wrapped row acts as a template row, and the API renders one output row per item in the JSON array. An invoice line-items table in Word looks like this:

| Description | Qty | Unit Price | Total |
|---|---|---|---|
| {{TableStart:line_items}}{{description}} | {{qty}} | {{unit_price}} | {{total}}{{TableEnd:line_items}} |

Within the array row, ROW_NUMBER auto-increments with each rendered row. A SUM(ABOVE) field placed in the row directly below the {{TableEnd:line_items}} marker calculates a column total across all rendered data rows.

For nested JSON objects, use dot-notation in your tags. A shipping address block references {{shipping.street}}, {{shipping.city}}, and {{shipping.postal_code}}, mapping to properties nested inside a shipping object in your payload. The nesting can go multiple levels deep, so {{customer.address.city}} resolves against documentValues.customer.address.city.
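A local pre-flight check can mimic this dot-notation lookup and catch tags that have no matching value before you spend a credit. The sketch below is not how DocGen resolves tags internally. It is a plain dictionary walk, with a deliberately narrow regex that matches only simple {{path}} tags and skips TableStart/TableEnd markers and tags carrying format switches. All names and sample values here are hypothetical.

```python
import re

# Matches {{name}} and {{a.b.c}} only; markers with ":" or switches are skipped
TAG_PATTERN = re.compile(r"\{\{\s*([A-Za-z_][\w.]*)\s*\}\}")


def resolve_path(data: dict, path: str):
    """Walk a dot-separated path through nested dicts; None if any step is missing."""
    current = data
    for part in path.split("."):
        if not isinstance(current, dict) or part not in current:
            return None
        current = current[part]
    return current


def unresolved_tags(template_text: str, data: dict) -> list[str]:
    """List simple tags in template_text that have no value in data."""
    return [tag for tag in TAG_PATTERN.findall(template_text)
            if resolve_path(data, tag) is None]


values = {"customer": {"address": {"city": "Portland"}},
          "shipping": {"street": "1 Main St"}}
text = "Ship to {{shipping.street}}, {{shipping.city}} for {{customer.address.city}}"
print(unresolved_tags(text, values))  # ['shipping.city']
```

The check runs against plain text you supply, for example a list of tags kept alongside the template, so it does not need to parse the .docx itself.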

For a working starting point, grab the downloadable invoice template from the foxit-demo-templates repo. The file is well under the 4 MB upload limit and demonstrates every pattern this article uses: scalar tags, {{TableStart:line_items}} / {{TableEnd:line_items}} with {{ROW_NUMBER}}, currency and date format switches, and subtotal / tax / total fields below the line-items table.

One sizing constraint applies while you build your own template. DocGen rejects uploads larger than 4 MB, so if you embed product photos, scanned letterhead, or full font subsets, compress the images before saving, drop embedded fonts where you can rely on system fonts, or split a large template into smaller per-section templates that you generate and merge separately.
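The size limit is cheap to enforce locally before encoding and uploading. The helper below is a minimal sketch; it assumes “4 MB” means 4 × 1024 × 1024 bytes, which is worth confirming against the API reference, and the function name is made up for illustration.

```python
import os

MAX_TEMPLATE_BYTES = 4 * 1024 * 1024  # assumption: "4 MB" is 4 MiB


def check_template_size(path: str, limit: int = MAX_TEMPLATE_BYTES) -> int:
    """Return the file size in bytes, or raise if it exceeds the limit."""
    size = os.path.getsize(path)
    if size > limit:
        raise ValueError(
            f"{path} is {size / 1_048_576:.2f} MB; limit is {limit / 1_048_576:.0f} MB"
        )
    return size
```

Calling check_template_size("invoice_template.docx") at the top of a generation script turns an avoidable 400 response into an immediate local error.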

Make Your First API Call: Generate a PDF from JSON

Run a quick pre-flight check before the first call to catch the issues that derail most clean-account run-throughs:

- Account created and client_id / client_secret copied from the Developer Portal API Keys section

- Sample template saved locally as invoice_template.docx in the directory you’ll run the script from

- Template file size confirmed under 4 MB (ls -lh invoice_template.docx on macOS or Linux, right-click → Properties on Windows)

With those in place, confirm your credentials work with a cURL call. The Foxit Developer Portal includes a Postman collection for this, but a quick cURL request against the API catches auth issues before any code runs:

curl -X POST "https://na1.fusion.foxit.com/document-generation/api/GenerateDocumentBase64" \

-H "client_id: YOUR_CLIENT_ID" \

-H "client_secret: YOUR_CLIENT_SECRET" \

-H "Content-Type: application/json" \

-d '{"base64FileString":"","documentValues":{},"outputFormat":"pdf"}'

A 401 here means invalid credentials. A 400 with a message about the template confirms your headers are accepted and you can proceed to the full call.

Save your .docx template as invoice_template.docx in the same directory as this script, then run the complete generation:

import requests

import base64

CLIENT_ID = "your_client_id"

CLIENT_SECRET = "your_client_secret"

API_URL = "https://na1.fusion.foxit.com/document-generation/api/GenerateDocumentBase64"

# Read and encode the template

with open("invoice_template.docx", "rb") as f:

    template_b64 = base64.b64encode(f.read()).decode("utf-8")

# Build the data payload

document_values = {

"customer_name": "Acme Corporation",

"invoice_number": "INV-2025-0042",

"invoice_date": "07/15/2025",

"due_date": "08/14/2025",

"line_items": [

{

"description": "API Integration Consulting",

"qty": 8,

"unit_price": 195.00,

"total": 1560.00

},

{

"description": "Document Automation Setup",

"qty": 1,

"unit_price": 750.00,

"total": 750.00

}

],

"subtotal": 2310.00,

"tax_rate": 0.08,

"tax_amount": 184.80,

"total_due": 2494.80

}

# Construct the request body

payload = {

"base64FileString": template_b64,

"documentValues": document_values,

"outputFormat": "pdf"

}

headers = {

"client_id": CLIENT_ID,

"client_secret": CLIENT_SECRET,

"Content-Type": "application/json"

}

response = requests.post(API_URL, json=payload, headers=headers)

if response.status_code == 200:

    result = response.json()

    pdf_bytes = base64.b64decode(result["base64FileString"])

    # Sanity-check the PDF magic bytes before writing to disk

    if pdf_bytes[:5] != b"%PDF-":

        raise ValueError("Response did not contain a valid PDF")

    with open("invoice_output.pdf", "wb") as out:

        out.write(pdf_bytes)

    print("PDF written to invoice_output.pdf")

else:

    print(f"Error {response.status_code}: {response.json().get('message')}")

The success response is a JSON object with three keys: base64FileString (the rendered PDF, base64-encoded), fileExtension ("pdf"), and message ("PDF Document Generated Successfully"). Decoding and writing the bytes to disk gives you a complete, formatted PDF with every tag replaced by its corresponding data value. If you omit a key from documentValues, the API renders the corresponding tag as an empty string, producing a blank field in the output.
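The totals in the document_values payload above are typed in by hand, which invites drift between line items and the summary fields. One option is to derive them before building the payload. The sketch below uses Decimal to avoid float rounding surprises; the function name and the half-up rounding rule are assumptions for illustration, not something the DocGen API requires.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def invoice_totals(line_items, tax_rate):
    """Derive line totals, subtotal, tax, and total due from qty and unit price."""
    rate = Decimal(str(tax_rate))
    items = []
    for item in line_items:
        total = (Decimal(str(item["unit_price"])) * item["qty"]).quantize(CENT, ROUND_HALF_UP)
        items.append({**item, "total": float(total)})
    subtotal = sum((Decimal(str(i["total"])) for i in items), Decimal("0"))
    tax = (subtotal * rate).quantize(CENT, ROUND_HALF_UP)
    return {
        "line_items": items,
        "subtotal": float(subtotal),
        "tax_rate": float(rate),
        "tax_amount": float(tax),
        "total_due": float(subtotal + tax),
    }


totals = invoice_totals(
    [
        {"description": "API Integration Consulting", "qty": 8, "unit_price": 195.00},
        {"description": "Document Automation Setup", "qty": 1, "unit_price": 750.00},
    ],
    tax_rate=0.08,
)
print(totals["subtotal"], totals["tax_amount"], totals["total_due"])
```

The returned dictionary can be merged into document_values with update(), and for the two sample items it reproduces the figures used in the script: 2310.0, 184.8, and 2494.8.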

Advanced Data Scenarios: Arrays, Nested Objects, and Built-In Functions

The two-row invoice above works, but most production documents have more complex data shapes. Three patterns cover the majority of real-world cases.

For multi-row tables, the line_items array in the Python snippet above already shows the basic structure. To generate five rows, pass five objects in the array. The Word template row tagged with {{TableStart:line_items}} and {{TableEnd:line_items}} repeats exactly once per array item:

{

"line_items": [

{

"description": "UX Design Review",

"qty": 4,

"unit_price": 150.0,

"total": 600.0

},

{

"description": "Backend API Development",

"qty": 12,

"unit_price": 185.0,

"total": 2220.0

},

{

"description": "Database Schema Migration",

"qty": 3,

"unit_price": 200.0,

"total": 600.0

},

{

"description": "QA Testing",

"qty": 6,

"unit_price": 95.0,

"total": 570.0

},

{

"description": "Deployment and Documentation",

"qty": 2,

"unit_price": 175.0,

"total": 350.0

}

]

}

The API generates exactly five table rows. Swap in 50 items and you get 50 rows, with page breaks handled by Word’s native pagination logic.

For nested objects, the DocGen API resolves dot-notation paths against the full depth of your JSON structure. A shipping confirmation template referencing {{customer.address.city}} works against this payload without any flattening on your end:

{

"customer": {

"name": "Sarah Chen",

"email": "[email protected]",

"address": {

"street": "742 Evergreen Terrace",

"city": "Portland",

"state": "OR",

"postal_code": "97201"

}

}

}

In the Word template, {{customer.name}}, {{customer.address.city}}, and {{customer.address.postal_code}} each resolve to the correct nested value. You can reference the same nested object from multiple locations in the template, and the API populates each instance independently.

For numeric and date formatting, the DocGen API respects Word’s native field switch syntax. Adding \# Currency to a tag formats a numeric value as a currency string, so {{unit_price \# Currency}} renders 195.00 as $195.00. Date fields accept \@ "MM/dd/yyyy" to control output format, so {{invoice_date \@ "MM/dd/yyyy"}} renders an ISO date string such as 2025-07-15 as 07/15/2025. To auto-calculate a column total, place a SUM(ABOVE) field in the Word table row immediately below {{TableEnd:line_items}} and the API evaluates it against the rendered data rows.
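If you would rather keep template tags plain and format values in code, the same output strings can be produced client-side before the payload is sent. This is an alternative sketch, not a description of how the API evaluates field switches; the helper names are invented, and the currency helper assumes US dollars with comma thousands separators.

```python
from datetime import date


def format_currency(amount: float) -> str:
    """Format a number as US-style currency with two decimals."""
    return f"${amount:,.2f}"


def format_us_date(iso_date: str) -> str:
    """Convert an ISO 8601 date string (YYYY-MM-DD) to MM/dd/yyyy."""
    return date.fromisoformat(iso_date).strftime("%m/%d/%Y")


print(format_currency(195.00))       # $195.00
print(format_us_date("2025-07-15"))  # 07/15/2025
```

Formatting in code keeps locale decisions in one place, at the cost of sending strings rather than numbers, so a SUM(ABOVE) field in the template would no longer have numeric cells to add up.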

Error Handling and Production Readiness

The DocGen API returns a focused set of HTTP status codes. A 200 confirms successful generation. A 401 means your client_id or client_secret headers are invalid, and the fix is to re-copy the credentials from the Developer Portal. A 400 covers three cases. The first is a malformed request body, for example a missing base64FileString or outputFormat. The second is structural issues with the template itself, such as a {{TableStart}} marker placed outside its table row. The third is an oversize template; DocGen rejects .docx uploads larger than 4 MB, and the fix is to compress embedded images, drop embedded fonts, or split the template before re-encoding. The message field in every non-200 response body gives you the specific reason, so log it rather than discarding the response object.

A production wrapper handles all three cases and adds exponential backoff for transient server errors:

import requests

import base64

import time

def generate_document(client_id, client_secret, template_path,

                      document_values, output_format="pdf"):

    API_URL = "https://na1.fusion.foxit.com/document-generation/api/GenerateDocumentBase64"

    with open(template_path, "rb") as f:

        template_b64 = base64.b64encode(f.read()).decode("utf-8")

    payload = {

        "base64FileString": template_b64,

        "documentValues": document_values,

        "outputFormat": output_format

    }

    headers = {

        "client_id": client_id,

        "client_secret": client_secret,

        "Content-Type": "application/json"

    }

    max_retries = 3

    for attempt in range(max_retries):

        try:

            response = requests.post(API_URL, json=payload,

                                     headers=headers, timeout=30)

            if response.status_code == 200:

                return base64.b64decode(response.json()["base64FileString"])

            if response.status_code == 401:

                raise ValueError("Authentication failed: re-check client_id and client_secret")

            # Any other 4xx is a request problem, so retrying would not help

            if 400 <= response.status_code < 500:

                msg = response.json().get("message", "Bad request")

                raise ValueError(f"Request error ({response.status_code}): {msg}")

            if response.status_code >= 500:

                if attempt < max_retries - 1:

                    wait = 2 ** attempt

                    print(f"Server error ({response.status_code}), retrying in {wait}s...")

                    time.sleep(wait)

                    continue

                raise RuntimeError(f"Server error after {max_retries} attempts")

        except requests.exceptions.Timeout:

            # Timeouts are transient: back off and retry, re-raise on the last attempt

            if attempt < max_retries - 1:

                time.sleep(2 ** attempt)

                continue

            raise

    raise RuntimeError("Max retries exceeded")

The wrapper raises immediately on 4xx responses because retrying a credential error or a malformed request produces the same result. Exponential backoff applies only to 5xx responses and timeouts, where the issue is transient.

Once generate_document() returns raw PDF bytes, routing them downstream takes three lines:

import boto3

s3 = boto3.client("s3")

pdf_bytes = generate_document(CLIENT_ID, CLIENT_SECRET, "invoice_template.docx", document_values)

s3.put_object(Bucket="my-documents-bucket", Key="invoices/INV-2025-0042.pdf", Body=pdf_bytes)

To attach the output to an email, add pdf_bytes to an email.message.EmailMessage with add_attachment() and send the message through smtplib. To collect a signature on the generated document, base64-encode the bytes and POST them to Foxit’s eSign API with the signer’s email address in the request body. The full eSign API reference is at docs.developer-api.foxit.com.
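For the email route, Python’s standard library handles the MIME packaging. The sketch below builds the message only; the function name, subject line, and addresses are placeholders, and the SMTP host shown in the comments is hypothetical.

```python
from email.message import EmailMessage


def build_invoice_email(pdf_bytes: bytes, sender: str, recipient: str,
                        filename: str = "invoice.pdf") -> EmailMessage:
    """Wrap generated PDF bytes in a MIME message with a single attachment."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = recipient
    msg["Subject"] = f"Your invoice: {filename}"
    msg.set_content("Your invoice is attached as a PDF.")
    # Raw bytes need an explicit MIME type
    msg.add_attachment(pdf_bytes, maintype="application",
                       subtype="pdf", filename=filename)
    return msg


# Sending is left commented out; host and port are placeholders.
# import smtplib
# with smtplib.SMTP("smtp.example.com", 587) as smtp:
#     smtp.starttls()
#     smtp.send_message(build_invoice_email(pdf_bytes, "billing@example.com", "customer@example.com"))
```

Keeping message construction separate from sending makes the attachment logic testable without a mail server.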

Common Mistakes

A short list of the issues that account for almost every failed first run.

- Smart-quote autocorrect on braces. Word’s AutoCorrect can convert the second { of {{ into a curly-quote glyph, which breaks tag parsing silently. Disable “Straight quotes with smart quotes” under AutoCorrect Options, or paste tags as plain text.

- Token case sensitivity. {{Customer_Name}} and {{customer_name}} are different keys. Match the casing in your JSON exactly.

- TableStart and TableEnd must sit in the same Word table row. Splitting them across two rows, or placing either marker outside the table, leaves the loop unrendered with no error.

- Template over 4 MB. The API rejects oversize uploads with a 400. Compress embedded images, drop embedded fonts where system fonts will do, or split the template into smaller pieces.

- Missing payload key. The API renders an unmatched tag as an empty string rather than failing, so a 200 response does not guarantee every field is populated. Spot-check the rendered PDF as part of any pipeline test.

- Auth header typos. Headers are client_id and client_secret in snake_case. Client-Id, ClientId, or X-Client-Id all return 401.

Run the Full Invoice Example End-to-End Right Now

Create a free account directly at account.foxit.com/site/sign-up. This skips the pricing-page redirect you hit from the marketing site and drops you straight into the account form.

- Open account.foxit.com/site/sign-up and complete the form (no credit card required).

- After verification, sign in to the Developer Portal and the Developer plan (500 credits per year) is active by default.

- Open the API Keys section and copy your client_id and client_secret.

With credentials in hand, run the example end-to-end:

- Download invoice_full.docx from the foxit-demo-templates repo and save it locally as invoice_template.docx in your working directory. The file is well under the 4 MB upload limit and exercises every tag pattern this article covers.

- Paste your credentials into the CLIENT_ID and CLIENT_SECRET variables in the Python script from the previous section.

- Edit the document_values dictionary with your own customer name, invoice number, and line items.

- Run the script and open invoice_output.pdf.

The free Developer plan’s 500 annual credits cover this tutorial dozens of times over before you spend anything. The full API reference at docs.developer-api.foxit.com covers every endpoint parameter, the complete tag specification, all supported output formats, and the full GenerateDocumentBase64 request and response schema.

Get started with a free account (no credit card required) and generate your first dynamic PDF in under 10 minutes.

Generate Dynamic PDFs from JSON using Foxit APIs

See how easy it is to generate PDFs from JSON using Foxit’s Document Generation API. With Word as your template engine, you can dynamically build invoices, offer letters, and agreements—no complex setup required. This tutorial walks through the full process in Python and highlights the flexibility of token-based document creation.

One of the more fascinating APIs in our library is the Document Generation API. It lets you create dynamic PDFs or Word documents by merging your own data into Word templates. That may sound simple (and the code you’re about to see is indeed simple), but the real power lies in how flexible Word can be as a template engine. This API could be used for:

- Creating invoices

- Creating offer letters

- Creating dynamic agreements (which can integrate with our eSign API)

All of this is made available via a simple API and a “token language” you’ll use within Word to create your templates. Whether you’re feeding in data from a database, a form submission, or a JSON API response, the process looks the same from your Python script. Let’s take a look at how this is done.

Credentials

Before we go any further, head over to our developer portal and grab a set of free credentials. This will include a client ID and secret values – you’ll need both to make use of the API.

Using the API

The Document Generation API flow is a bit different from our PDF Services APIs in that the execution is synchronous. You don’t need to upload your document beforehand or download a result. You simply call the API (passing your data and template) and the result has your new PDF (or Word document). With it being this simple, let’s get into the code.

Loading Credentials

My script begins by loading in the credentials and API root host via the environment:

import os

CLIENT_ID = os.environ.get('CLIENT_ID')

CLIENT_SECRET = os.environ.get('CLIENT_SECRET')

HOST = os.environ.get('HOST')

As always, try to avoid hard coding credentials directly into your code.
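One caveat with os.environ.get is that a missing variable quietly becomes None and only fails later, inside the HTTP call. A small loader that fails fast can make that easier to debug. This is a sketch with a hypothetical function name; the three variable names are the ones used above.

```python
import os

REQUIRED_VARS = ("CLIENT_ID", "CLIENT_SECRET", "HOST")


def load_settings(env=os.environ) -> dict:
    """Read the three settings, raising one error that names every missing variable."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing environment variables: {', '.join(missing)}")
    return {name: env[name] for name in REQUIRED_VARS}
```

Accepting the environment as a parameter lets you pass a plain dictionary in tests instead of mutating os.environ.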

Calling the API

The endpoint only requires you to pass the output format, your data, and a base64 version of your file. “Your data” can be almost anything you like—though it should start as an object (i.e., a dictionary in Python with key/value pairs). Beneath that, anything goes: strings, numbers, arrays of objects, and so on.

Here’s a Python wrapper showing this in action:

def docGen(doc, data, id, secret):

    headers = {

        "client_id":id,

        "client_secret":secret

    }

    body = {

        "outputFormat":"pdf",

        "documentValues": data,

        "base64FileString":doc

    }

    request = requests.post(f"{HOST}/document-generation/api/GenerateDocumentBase64", json=body, headers=headers)

    return request.json()

And here’s an example calling it:

with open('../../inputfiles/docgen_sample.docx', 'rb') as file:

    bd = file.read()

    b64 = base64.b64encode(bd).decode('utf-8')

data = {

"name":"Raymond Camden",

"food": "sushi",

"favoriteMovie": "Star Wars",

"cats": [

{"name":"Elise", "gender":"female", "age":14 },

{"name":"Luna", "gender":"female", "age":13 },

{"name":"Crackers", "gender":"male", "age":13 },

{"name":"Gracie", "gender":"female", "age":12 },

{"name":"Pig", "gender":"female", "age":10 },

{"name":"Zelda", "gender":"female", "age":2 },

{"name":"Wednesday", "gender":"female", "age":1 },

],

}

result = docGen(b64, data, CLIENT_ID, CLIENT_SECRET)

You’ll note here that my data is hard-coded. In a real application, this would typically be dynamic: read from the file system, queried from a database, or sourced from any other location.

The result object contains a message representing the success or failure of the operation, the file extension for the result, and the base64 representation of the result. To turn that base64 string back into a file, decode it first:

b64_bytes = result["base64FileString"].encode('ascii')

binary_data = base64.b64decode(b64_bytes)

Most likely you’ll always be outputting PDFs, so here’s a simple bit of code that stores the result:

with open('../../output/docgen_sample.pdf', 'wb') as file:

    file.write(binary_data)

print('Done and stored to ../../output/docgen_sample.pdf')

There’s a bit more to the API than I’ve shown here, so be sure to check the docs. But now it’s time for the real star of this API: Word.

Using Word as a Template

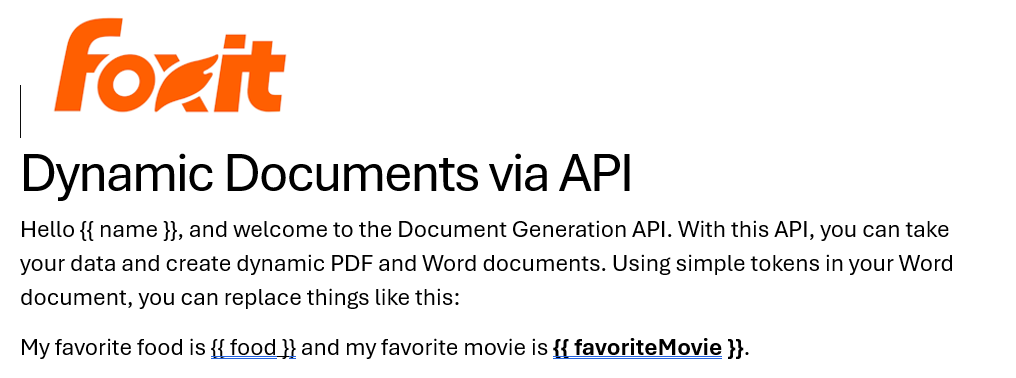

I’ve probably used Microsoft Word for longer than you’ve been alive and I’ve never really thought much about it. But when you begin to think of a simple Word document as a template, all of a sudden the possibilities begin to excite you. In our Document Generation API, the template system works via simple “tokens” in your document marked by opening and closing double brackets.

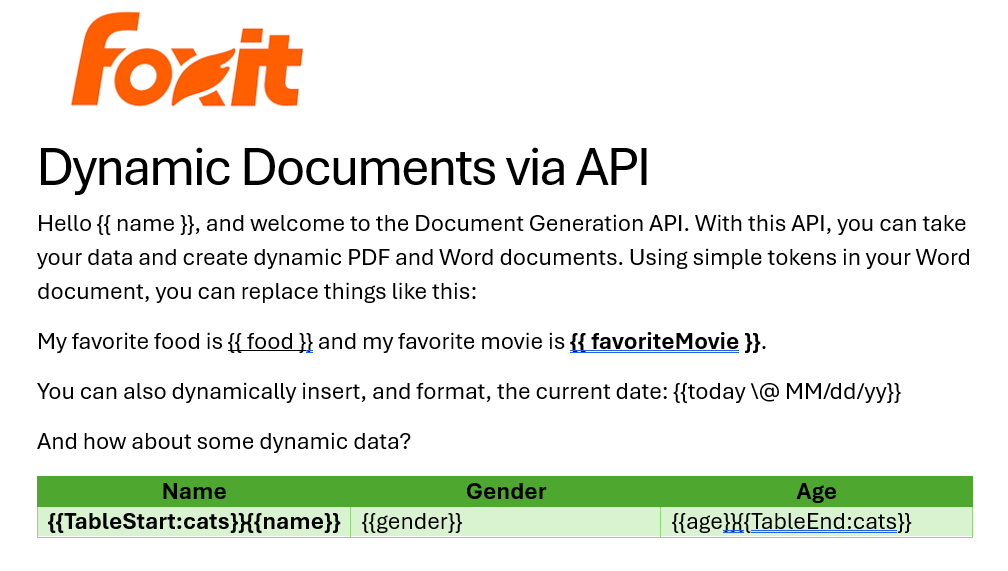

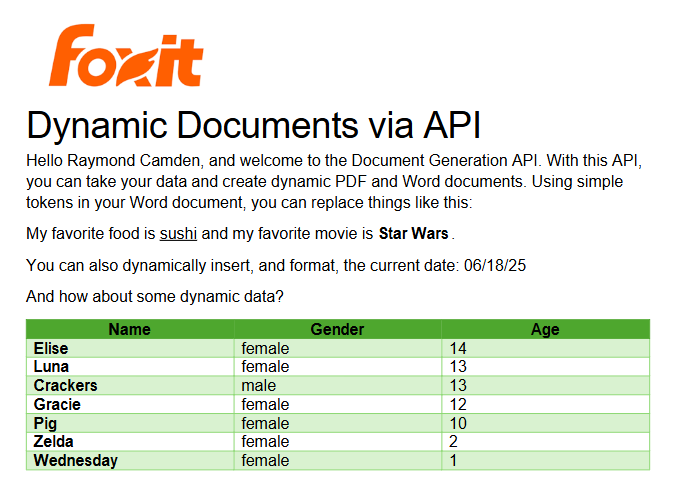

Consider this block of text:

See how name is surrounded by double brackets? And food and favoriteMovie? When this template is sent to the API along with the corresponding values, those tokens are replaced dynamically. In the screenshot, notice how favoriteMovie is bolded. That’s fine. You can use any formatting, styling, or layout options you wish.

That’s one example, but you also get some built-in values as well. For example, including today as a token will insert the current date, and can be paired with date formatting to specify how the date looks:

Remember the array of cats from earlier? You can use that to create a table in Word like this:

Notice that I’ve used two new tags here, TableStart and TableEnd, both of which reference the array, cats. Then in my table cells, I refer to the values from that array. Again, the color you see here is completely arbitrary and was me making use of the entirety of my Word design skills.

Here’s the template as a whole to show you everything in context:

The Result

Given the code shown above with those values, and given the Word template just shared, once passed to the API, the following PDF is created:

What About Converting PDF to JSON?

So far we’ve been going one direction: JSON data in, PDF out. But what if you need to go the other way—extract structured content from a PDF and work with it in your application?

Foxit’s PDF Services API includes an Extract endpoint that handles exactly this. You upload a PDF, specify whether you want TEXT, IMAGE, or PAGE-level data, and the API returns the extracted content. The text output is particularly useful if you want to feed the result into a data pipeline, search index, or AI workflow.

Here’s a quick look at how extraction works in Python. First, upload your PDF:

    def uploadDoc(path, id, secret):
        headers = {
            "client_id": id,
            "client_secret": secret
        }
        with open(path, 'rb') as f:
            files = {'file': (path, f)}
            request = requests.post(f"{HOST}/pdf-services/api/documents/upload", files=files, headers=headers)
            return request.json()

    doc = uploadDoc("../../inputfiles/input.pdf", CLIENT_ID, CLIENT_SECRET)

Then call the Extract endpoint with the document ID and the type of content you want. The result comes back in a structured format you can parse, store, or pass along to other tools, including an LLM if you’re building an AI document pipeline.
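As a rough sketch of that second step, the helper below only assembles the request and does not send it. The endpoint path and the documentId and extractType field names are assumptions based on the description above, so check them against the API docs before relying on them. build_extract_request is a hypothetical helper, and the host and document ID are placeholders.

```python
# Extract types named in the text above.
EXTRACT_TYPES = {"TEXT", "IMAGE", "PAGE"}


def build_extract_request(host: str, document_id: str, extract_type: str = "TEXT"):
    """Return (url, json_body) for an extract call. Path and field names are assumptions."""
    extract_type = extract_type.upper()
    if extract_type not in EXTRACT_TYPES:
        raise ValueError(f"extract_type must be one of {sorted(EXTRACT_TYPES)}")
    url = f"{host.rstrip('/')}/pdf-services/api/documents/modify/pdf-extract"  # assumed path
    body = {"documentId": document_id, "extractType": extract_type}  # assumed field names
    return url, body


# Placeholder host and document ID, for illustration only.
url, body = build_extract_request("https://example.invalid", "doc-123", "text")
print(url)
print(body)
```

You would then send it the same way as the upload, for example with requests.post(url, json=body, headers=headers), using the document ID returned by the upload call.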

You can read a full walkthrough in our PDF text extraction guide.

Ready to Try?

If this looks cool, be sure to check the docs for more information about the template language and API. Sign up for some free developer credentials and reach out on our developer forums with any questions.

If you’re building AI agents or LLM-powered workflows, Foxit also offers an MCP server that lets you connect your agents directly to Foxit PDF Services—so your AI tools can generate, extract, and process documents without any custom glue code.

Want the code? Get it on GitHub (Python).

If you are more of a Node person, check out that version. Get it on GitHub (Node.js).

Building Auditable, AI-Driven Document Workflows with Foxit APIs

We had an incredible time at API World 2025 connecting with developers, sharing ideas, and seeing how Foxit APIs power everything from AI-driven resume builders to interactive doodle apps. In this post, we’ll walk through the same hands-on workflow Jorge Euceda demoed live on stage—showing how to build an auditable, AI-powered document automation system using Foxit PDF Services and Document Generation APIs.

How to Build an AI Resume Analyzer with Python & Foxit APIs (API World ’25)

This year’s API World was packed with energy—and it was amazing meeting so many developers face-to-face at the Foxit booth. We spent three days trading ideas about document automation, AI workflows, and integration challenges.

Our team hosted a hands-on workshop and sponsored the API World Hackathon, where developers submitted 16 high-quality projects built with Foxit APIs. Submissions ranged from:

Automated legal-advice generators

Compatibility-rating apps that analyze your personality match

AI-powered resume optimizers that tailor your CV to dream-job descriptions

Collaborative doodle games that turn drawings into shareable PDFs

Each project offered a new perspective on what’s possible with Foxit APIs—and we loved seeing the creativity.

Among all the sessions, Jorge Euceda’s workshop stood out as a crowd favorite. It showed how to make AI document decisions auditable, explainable, and replayable using event sourcing and two key Foxit APIs. That’s exactly what we’ll walk through below.

Replicate the Full Demo

Click here to grab the project overview file.

Prefer to follow along with the live session instead of reading step-by-step?

Watch Jorge’s complete “AI-Powered Resume to Report” presentation from API World 2025.

It includes every step shown below—plus real-time API responses.

What You’ll Build

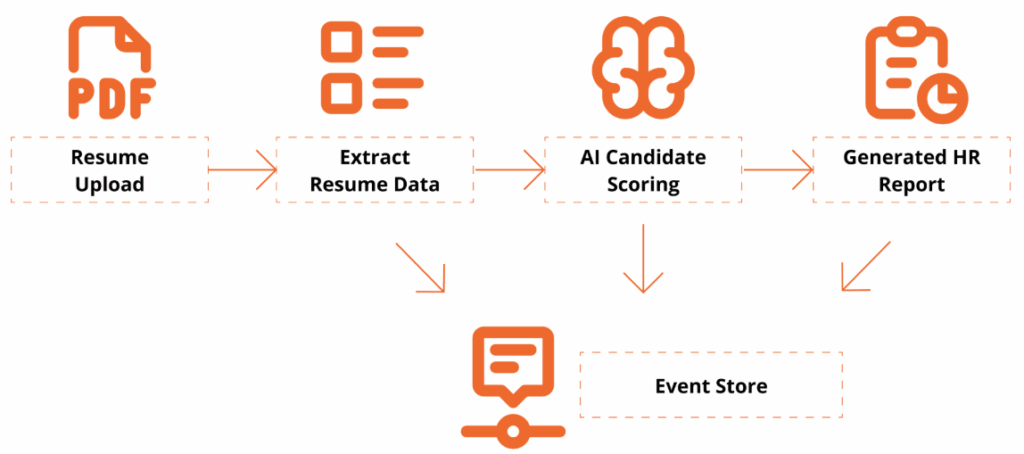

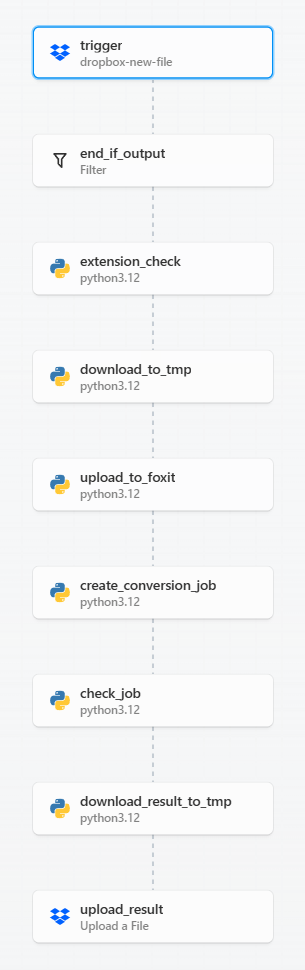

A complete, auditable workflow:

Resume Upload → Extract Resume Data → AI Candidate Scoring → Generate HR Report → Event Store

This workshop is designed for technical professionals and managers who want to learn how to use application programming interfaces (APIs) and explore how AI can enhance document workflows. Attendees will get hands-on experience with Foxit’s PDF Services (extraction/OCR) and Document Generation APIs, and see how event sourcing turns AI decisions into an auditable, replayable ledger.

By the end, you’ll have a Python-based demo that extracts data from a PDF resume, analyzes it against a policy, and generates a polished HR Report PDF with a traceable event log.

Getting Set Up

To follow along, you’ll need:

Access to a terminal with a Python 3.9+ Environment and internet connectivity

Visual Studio Code or your preferred IDE

Basic familiarity with REST/JSON (helpful but not required)

- Install Dependencies

    python -V
    # virtual environment setup, requests installation
    python3 -m venv myenv
    source myenv/bin/activate
    pip3 install requests

- Download the project’s zip file below

Extract the files somewhere on your computer and open the folder in Visual Studio Code or your preferred IDE.

A sample resume is provided as inputs/input_resume.pdf, but you can replace it with any resume PDF you want a report on.

- Create a Foxit Account for credentials

Create a Free Developer Account now or navigate to our getting started guide, which will go over how to create a free trial.

Hands-On Walkthrough

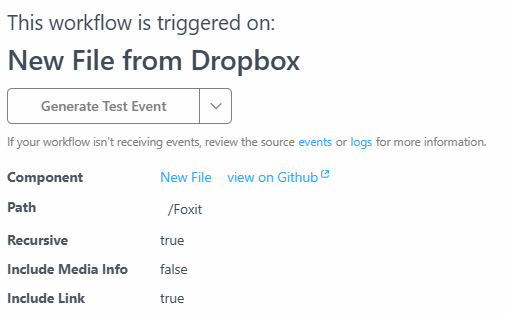

Step 1 – Open the Project

Now that you’ve downloaded the workshop source code, navigate to the resume_to_report.py file, which will serve as our main entry point.

Once dependencies are installed and the ZIP file extracted, open your workspace and run:

    python3 resume_to_report.py

You should see console logs showing:

An AI Report printed as JSON

A generated PDF (outputs/HR_Report.pdf)

An event ledger (outputs/events.json) with traceable actions

Step 2 — Inspect the outputs

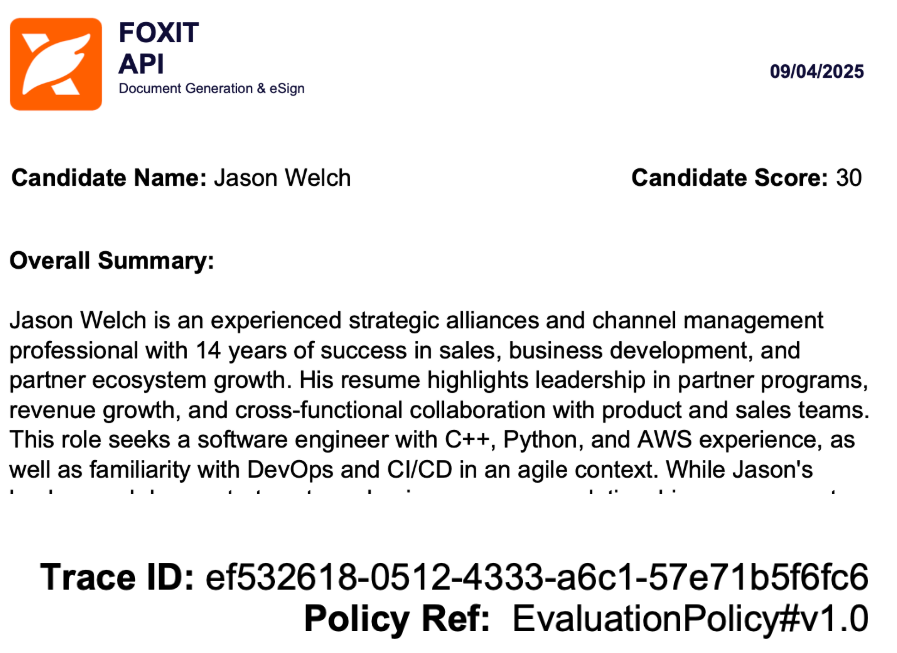

Open the generated HR report to review:

Candidate name and phone

Overall fit score

Matching skills & gaps

Summary and policy reference in the footer

Then open events.json to see your audit trail—each entry captures the AI’s decision context.

    {
      "eventType": "DecisionProposed",
      "traceId": "8d1e4df6-8ac9-4f31-9b3a-841d715c2b1c",
      "payload": {
        "fitScore": 82,
        "policyRef": "EvaluationPolicy#v1.0"
      }
    }

This is your audit trail.
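Here is a minimal sketch of how an entry like this could be built and appended to a ledger file. The field names come from the sample event above. Everything else is an assumption: make_event and append_event are hypothetical helpers rather than the workshop’s actual code, and the JSON-array file layout and the PolicyLoaded payload are guesses.

```python
import json
import tempfile
import uuid
from pathlib import Path
from typing import Optional


def make_event(event_type: str, payload: dict, trace_id: Optional[str] = None) -> dict:
    """Build one ledger entry; a fresh trace ID is generated if none is given."""
    return {
        "eventType": event_type,
        "traceId": trace_id or str(uuid.uuid4()),
        "payload": payload,
    }


def append_event(ledger_path: Path, event: dict) -> list:
    """Append to a JSON-array ledger file; existing entries are never modified."""
    events = json.loads(ledger_path.read_text()) if ledger_path.exists() else []
    events.append(event)
    ledger_path.write_text(json.dumps(events, indent=2))
    return events


# Demo against a temporary file so nothing in outputs/ is touched.
with tempfile.TemporaryDirectory() as tmp:
    ledger = Path(tmp) / "events.json"
    first = make_event("PolicyLoaded", {"policyRef": "EvaluationPolicy#v1.0"})  # assumed payload
    append_event(ledger, first)
    # Reuse the trace ID so both events belong to the same lineage.
    decision = make_event(
        "DecisionProposed",
        {"fitScore": 82, "policyRef": "EvaluationPolicy#v1.0"},
        first["traceId"],
    )
    events = append_event(ledger, decision)
    print(len(events), events[1]["eventType"])
```

Appending rather than overwriting is the point of the design: earlier entries stay as they were written, so the file can be read back later as a history of what was decided and under which policy.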

Step 3 — Replay & Explain a Policy Change

Replay demonstrates why event-sourcing matters:

Edit inputs/evaluation_policy.json: add a hard requirement (e.g., "kubernetes") or adjust the job_description emphasis.

Re-run the script with the same resume.

Compare:

New decision and updated PDF content

Event log now reflects the updated rationale (PolicyLoaded snapshot → new DecisionProposed with the same traceId lineage)

Emphasize: The input resume hasn’t changed; only policy did — the event ledger explains the difference.
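One way to make that difference visible is to compare the two policy snapshots directly. This is a sketch under assumptions: diff_policy is a hypothetical helper, not part of the workshop code, and it assumes each logged policy snapshot is a flat JSON object.

```python
def diff_policy(old: dict, new: dict) -> dict:
    """Return {key: (old_value, new_value)} for every top-level key that differs."""
    changed = {}
    for key in sorted(set(old) | set(new)):
        if old.get(key) != new.get(key):
            changed[key] = (old.get(key), new.get(key))
    return changed


# Two snapshots that differ only by the added "kubernetes" requirement.
before = {"policyId": "EvaluationPolicy#v1.0", "hard_requirements": ["C++", "python", "aws"]}
after = {
    "policyId": "EvaluationPolicy#v1.0",
    "hard_requirements": ["C++", "python", "aws", "kubernetes"],
}
print(diff_policy(before, after))
```

With the resume held constant, a non-empty diff here is the explanation for any change in the decision.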

Policy: Drive Auditable & Replayable Decisions

The AI assistant uses a JSON policy file to control how it scores, caps, and summarizes results. Every policy snapshot is logged as its own event, creating a replayable audit trail for governance and compliance.

    {
      "policyId": "EvaluationPolicy#v1.0",
      "job_description": "Looking for a software engineer with expertise in C++, Python, and AWS cloud services. Experience building scalable applications in agile teams; familiarity with DevOps and CI/CD.",
      "overall_summary": "Make the summary as short as possible",
      "hard_requirements": ["C++", "python", "aws"]
    }

Notes:

policyId appears in both the report and event log.

job_description defines what the AI is looking for.

Changing these values creates a new traceable event.
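The workshop hands scoring to the AI assistant, so the snippet below is only a deterministic illustration of what a hard-requirement check might look like. check_hard_requirements and the sample resume text are hypothetical, and the matching rule (case-insensitive, whole-term) is an assumption rather than the demo’s actual logic.

```python
import re


def check_hard_requirements(resume_text: str, hard_requirements: list) -> dict:
    """Split requirements into matched and missing using case-insensitive whole-term search."""
    matched, missing = [], []
    for requirement in hard_requirements:
        # Lookarounds keep "aws" from matching inside "draws" and "C" from matching "C++".
        pattern = r"(?<![A-Za-z0-9])" + re.escape(requirement) + r"(?![A-Za-z0-9+#])"
        if re.search(pattern, resume_text, flags=re.IGNORECASE):
            matched.append(requirement)
        else:
            missing.append(requirement)
    return {"matched": matched, "missing": missing}


# Hypothetical policy fragment and resume text, for illustration only.
policy = {"hard_requirements": ["C++", "python", "aws", "kubernetes"]}
resume = "Senior engineer: 6 years of C++ and Python, deployed services on AWS."
result = check_hard_requirements(resume, policy["hard_requirements"])
print(result)
```

A check like this could run alongside the AI scoring, with its matched and missing lists logged as part of the decision payload.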

Generate a Polished Report

Next, use the Foxit Document Generation API to fill your Word template and create a formatted PDF report.

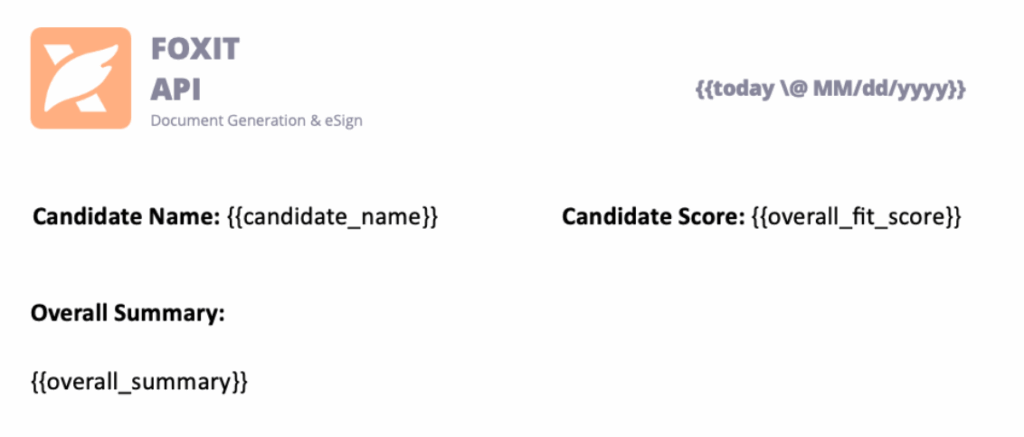

Open inputs/hr_report_template.docx. You will find the following HR reporting template, with placeholders for the fields we will be filling in:

Tips:

Include lightweight branding (logo/header) to make the generated PDF presentation-ready.

Include a footer with traceable Policy ID and Trace ID Events
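For orientation, the request body that fills the template can be assembled roughly like this. The body field names (outputFormat, documentValues, base64FileString) and the token names in the values are assumptions to verify against the API docs and your own template. build_docgen_body is a hypothetical helper, and the sketch only builds the body without calling the API.

```python
import base64
import json


def build_docgen_body(template_bytes: bytes, values: dict, output_format: str = "pdf") -> dict:
    """Assemble a JSON-serializable request body; the field names are assumptions."""
    return {
        "outputFormat": output_format,  # assumed field name
        "documentValues": values,  # assumed field name
        "base64FileString": base64.b64encode(template_bytes).decode("ascii"),  # assumed field name
    }


# Placeholder bytes stand in for the contents of inputs/hr_report_template.docx.
template_bytes = b"placeholder for the .docx file contents"
# Hypothetical token names; use whatever tokens your template actually contains.
values = {
    "candidateName": "Jane Doe",
    "fitScore": 82,
    "policyRef": "EvaluationPolicy#v1.0",
    "traceId": "8d1e4df6-8ac9-4f31-9b3a-841d715c2b1c",
}
body = build_docgen_body(template_bytes, values)
print(json.dumps(body, indent=2)[:80])
```

Passing the policy reference and trace ID as ordinary values is what lets the footer tip above work: they are just two more tokens in the template.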

Results and Audit Trail

Here’s what the final HR Report PDF looks like:

Every decision has a Trace ID and Policy Ref, so you can recreate the report at any time and verify how the AI arrived there.

Why Event-Sourced AI Matters

This pattern does more than score resumes—it proves that AI decisions can be transparent, deterministic, and trustworthy.

By using Foxit APIs to extract, analyze, and generate documents, developers can bring auditability to any workflow that relies on machine logic.

Key Takeaways

Auditability – Every AI step emits a verifiable event.

Replayability – Change a policy and regenerate for deterministic results.

Explainability – Decisions carry policy and trace references for clear “why.”

Automation – PDF Services and Document Generation handle the document lifecycle end-to-end.

Try It Yourself

Ready to build your own auditable AI workflow?

Demo and Source Code: document-workflows-with-foxit.pages.dev

Foxit Developer Portal: developer-api.foxit.com

API Docs: docs.developer-api.foxit.com

Watch the Full Presentation: Euceda’s API World session

Closing Thought

At API World, we set out to show how Foxit APIs can power real, transparent AI workflows—and the community response was incredible. Whether you’re building for HR, legal, finance, or creative industries, the same pattern applies:

Make your AI explain itself.

Start with the Foxit APIs, experiment with policies, and turn every AI decision into a traceable event that builds trust.

Create Custom Invoices with Word Templates and Foxit Document Generation

Invoicing is a critical part of any business. This tutorial shows how to automate the process by creating dynamic, custom PDF invoices with the Foxit Document Generation API. Learn how to design a Microsoft Word template with special tokens, prepare your data in JSON, and then use a simple Python script to generate your final invoices.

Invoicing is a critical part of any business, often involving multiple steps—gathering customer data, calculating amounts owed, and sending out invoices so your company can get paid. Foxit’s Document Generation API streamlines this process by making it easy to create well-formatted, dynamic PDF invoices. Let’s walk through an example.

Before You Start

If you want to follow along with this blog post, be sure to get your free credentials over on our developer portal. Also, read our introductory blog post, which covers the basics of working with our API.

As a reminder, the API makes use of Microsoft Word templates. These templates are essentially Word documents containing tokens wrapped in double brackets. When you call the API, you’ll pass the template and your data. Our API then dynamically replaces those tokens with your data and returns a PDF (you can also get a Word file back).

Creating Your Custom Invoice with Word Templates

Let’s begin by designing the template in Word. An invoice typically includes things like:

- The customer receiving the invoice

- The invoice number and issue date

- The payment due date

- A detailed list of items, including name, quantity, and price for each line item, with a total at the end

The Document Generation API makes no requirements in terms of how you design your templates. Size, alignment, and so forth, can match your corporate styles and be as fancy, or simple, as you like. Let’s consider the template below (I’ll link to where you can download this file at the end of the article):

Let's break it down from the top.

- The first token, {{ invoiceNum }}, represents the invoice number for the customer.

- The next token is special. {{ today \@ MM/dd/yyyy }} represents two different features of the Document Generation API. First, today is a special value representing the present time, or more accurately, when you call the API. The next portion represents a date mask for representing a date value. Our docs have a list of available masks.

- {{ accountName }} is another regular token.

- The payment date,