Your Puppeteer setup works fine at low volume. You launch a Chrome process, load the page, call page.pdf(), and write the bytes to disk. Clean enough. Then your invoice generation hits 500 documents per night, your report export feature goes live in three time zones simultaneously, and the wheels start coming off. Chrome processes time out waiting for JavaScript hydration. Memory climbs until your container OOMs. The font that renders correctly on your MacBook looks wrong on the Linux build server. You spend a Friday afternoon tuning networkidle2 timeouts per template instead of shipping features.

This is the failure mode of treating a rendering engine as a conversion service. Headless Chrome is a browser. Running it at production document volume means you’re operating a browser fleet: process pooling, memory isolation, crash recovery, rendering consistency across OS environments. That infrastructure overhead comes directly out of engineering time.

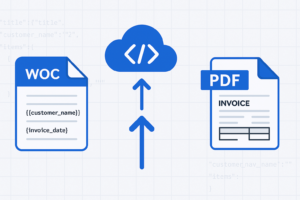

The architectural alternative is a managed REST API: POST your HTML (or a URL), let the service render the PDF, and download the result. The rendering infrastructure becomes the API provider’s problem. This guide covers how to build that conversion pipeline end-to-end using Foxit PDF Services API, from authentication through batch processing and production error handling.

The Production Problem with Headless Browser PDF Conversion

A standard Puppeteer setup looks like this:

    const browser = await puppeteer.launch();
    const page = await browser.newPage();
    await page.goto(url, { waitUntil: "networkidle2" });
    const pdf = await page.pdf({ format: "A4", printBackground: true });
    await browser.close();

At five documents a day, this is fine. At five hundred concurrent requests, it is not: each puppeteer.launch() spins up a full Chromium process (roughly 100-200MB RSS on Linux). In a container with 2GB of memory, 20 concurrent requests put you at 2-4GB for Chromium alone, which is at or past the limit before accounting for the Node.js process itself or any other application memory.
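The memory arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope estimate only: the function name and the 512MB reserved for the application are hypothetical, and the per-process figures are the 100-200MB range quoted above.

```python
def max_concurrent_browsers(container_mb: int, per_browser_mb: int, reserved_mb: int = 0) -> int:
    """Estimate how many browser processes fit in a container's memory budget."""
    usable_mb = container_mb - reserved_mb
    return max(usable_mb // per_browser_mb, 0)


# 2 GB container, using the 100-200 MB per-process range from the text
best_case = max_concurrent_browsers(2048, 100)   # optimistic footprint
worst_case = max_concurrent_browsers(2048, 200)  # pessimistic footprint

# Hypothetical: reserve 512 MB for the Node.js process and application memory
with_app = max_concurrent_browsers(2048, 200, reserved_mb=512)

print(best_case, worst_case, with_app)
```

Even the optimistic case leaves no headroom at 20 concurrent requests, which is why pooling becomes the next step.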

The standard solution is a Chrome process pool (libraries like puppeteer-cluster or generic-pool). Now you’re managing pool size tuning, handling pool exhaustion under burst traffic, and writing cleanup logic for crashed Chrome instances. You’ve added significant operational complexity to what started as a one-liner.

Font rendering is a separate category of pain. Chrome on macOS uses CoreText. Chrome on Linux uses FreeType with fontconfig. The same CSS font-family: 'Inter' declaration produces visibly different output depending on whether Inter is installed as a system font or loaded via a @font-face declaration, and whether the fallback stack resolves differently across environments. Teams that ship invoice PDFs to customers discover this in production, not in development.

JavaScript execution adds another dimension. If your page renders a data table via a React component that fetches data on mount, networkidle2 is not a reliable wait condition. Network activity can go idle before the DOM has finished updating. You end up tuning waitForSelector or adding arbitrary timeouts per template, and those timeouts become technical debt that breaks when the page changes.

The architectural fix isn’t a better Puppeteer wrapper. It’s offloading the entire rendering layer to a service that was built to handle it reliably: a managed REST API with consistent rendering environments, predictable behavior, and no infrastructure for your team to maintain.

How Cloud HTML-to-PDF APIs Handle Rendering

Cloud conversion APIs typically accept input in two modes: URL mode and file upload mode.

In URL mode, you pass a public URL. The API fetches the page, renders it, and returns a PDF. This works when your page is publicly accessible and all assets (fonts, images, stylesheets) load from the same domain or CDN. The tradeoff is that the API’s rendering environment must reach your server, which creates a dependency on network reachability and your server’s response time. If you’re generating PDFs from an internal dashboard behind a VPN, URL mode doesn’t work without additional networking.
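Before submitting a URL, a cheap local check can catch addresses that an external service obviously cannot reach. The sketch below is a heuristic under stated assumptions: looks_publicly_reachable() is a hypothetical helper, the .local and .internal suffixes are illustrative conventions rather than a complete list, and a DNS name that passes the check may still resolve to a private address.

```python
import ipaddress
from urllib.parse import urlparse


def looks_publicly_reachable(url: str) -> bool:
    """Heuristic pre-check: reject URLs that clearly point at local or private hosts."""
    parsed = urlparse(url)
    if parsed.scheme not in ("http", "https") or not parsed.hostname:
        return False
    host = parsed.hostname
    # Illustrative suffixes for internal-only names (an assumption, not a standard list)
    if host == "localhost" or host.endswith((".local", ".internal")):
        return False
    try:
        ip = ipaddress.ip_address(host)
    except ValueError:
        # A DNS name: nothing more can be said without resolving it
        return True
    return ip.is_global
```

URLs that fail this check are candidates for file upload mode instead.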

In file upload mode, you construct the complete HTML file (with inlined CSS and assets where needed) and upload it to the API. The service processes the file and returns a PDF. This eliminates the external asset dependency and makes your conversion more deterministic: the same HTML file always produces the same PDF, regardless of what’s deployed on your web server at the time.
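One way to make an HTML file self-contained is to replace external stylesheet links with inline style blocks before uploading. The following is a minimal sketch: inline_stylesheet() is a hypothetical helper, it matches link tags by their href only, and it assumes double-quoted attributes. A real template pipeline would more likely do this in the templating layer.

```python
import re


def inline_stylesheet(html: str, href: str, css: str) -> str:
    """Replace <link ... href="HREF" ...> with an inline <style> block holding CSS."""
    pattern = re.compile(
        r'<link\b[^>]*href="' + re.escape(href) + r'"[^>]*>',
        re.IGNORECASE,
    )
    # A function replacement avoids backslash interpretation in the CSS text
    return pattern.sub(lambda _match: f"<style>\n{css}\n</style>", html)


page = '<head><link rel="stylesheet" href="invoice.css"></head>'
self_contained = inline_stylesheet(page, "invoice.css", "body { font-size: 11pt; }")
print(self_contained)
```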

Beyond input mode, rendering fidelity depends on several factors:

- CSS @media print rules control what renders into the PDF. Navigation bars, sidebars, and hover states should be hidden via print stylesheets so they don’t appear in the output.

- Font loading strategy determines rendering consistency. Relying on system fonts produces different output across environments. Embedding fonts via @font-face with a CDN URL or base64-inlined data guarantees consistent rendering.

- Page layout properties (paper size, margins, orientation) can be controlled through CSS @page rules embedded in the HTML itself. This keeps layout configuration in the document rather than in API parameters.

- JavaScript execution matters for pages that render content dynamically. Some APIs wait for the page to stabilize before capturing; others capture immediately.

These factors are the same ones you’d manage with Puppeteer’s page.pdf() options, but with a cloud API you handle them through your HTML/CSS rather than through in-process code.

Setting Up Foxit PDF Services API: Authentication and First Conversion

Foxit PDF Services API is a cloud-hosted REST API built on Foxit’s proprietary PDF engine, backed by over 20 years of PDF technology development. Create an account at the Foxit Developer Portal (the Developer plan is free, includes 500 credits/year, and requires no credit card). Generate your API credentials (a client_id and client_secret) from the Developer Dashboard.

Understanding the Async Workflow

Unlike a simple request-response API, Foxit PDF Services uses an asynchronous task-based workflow. Every operation follows the same pattern:

- Submit the job (upload a file, or POST a URL)

- Receive a taskId in the response

- Poll the task status until it completes or fails

- Download the result using the resultDocumentId from the completed task

This design handles long-running operations gracefully. A complex HTML page might take several seconds to render; the async pattern means your client never blocks on a single HTTP request waiting for rendering to finish.

URL-to-PDF Conversion

For pages that are publicly accessible, URL-to-PDF is the simplest path. You POST the URL directly and the API fetches, renders, and converts it. Here’s the complete workflow in Python using the requests library:

    import os
    import requests
    from time import sleep

    HOST = os.environ["FOXIT_API_HOST"]  # e.g., https://na1.fusion.foxit.com
    CLIENT_ID = os.environ["FOXIT_CLIENT_ID"]
    CLIENT_SECRET = os.environ["FOXIT_CLIENT_SECRET"]

    AUTH_HEADERS = {
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_SECRET,
    }


    def create_url_to_pdf_task(url: str) -> str:
        """Submit a URL for PDF conversion. Returns a taskId."""
        headers = {**AUTH_HEADERS, "Content-Type": "application/json"}
        response = requests.post(
            f"{HOST}/pdf-services/api/documents/create/pdf-from-url",
            json={"url": url},
            headers=headers,
        )
        response.raise_for_status()
        return response.json()["taskId"]


    def poll_task(task_id: str, interval: int = 5) -> dict:
        """Poll until the task completes or fails. Returns the task status object."""
        headers = {**AUTH_HEADERS, "Content-Type": "application/json"}
        while True:
            response = requests.get(
                f"{HOST}/pdf-services/api/tasks/{task_id}",
                headers=headers,
            )
            response.raise_for_status()
            status = response.json()
            if status["status"] == "COMPLETED":
                return status
            elif status["status"] == "FAILED":
                raise RuntimeError(f"Task {task_id} failed: {status}")
            sleep(interval)


    def download_document(document_id: str, output_path: str) -> None:
        """Download the resulting PDF by its document ID."""
        response = requests.get(
            f"{HOST}/pdf-services/api/documents/{document_id}/download",
            headers=AUTH_HEADERS,
            stream=True,
        )
        response.raise_for_status()
        with open(output_path, "wb") as f:
            for chunk in response.iter_content(chunk_size=8192):
                f.write(chunk)


    # Full workflow: URL to PDF
    task_id = create_url_to_pdf_task("https://example.com/invoice/1042")
    result = poll_task(task_id)
    download_document(result["resultDocumentId"], "invoice_1042.pdf")
    print("PDF generated successfully.")

In this code, you define three reusable functions that map to the async workflow: create_url_to_pdf_task() submits a public URL and returns a taskId, poll_task() checks the task status in a loop until it reaches COMPLETED or FAILED, and download_document() streams the resulting PDF to disk. The final three lines wire them together into the complete conversion pipeline.

Before running: Set your FOXIT_API_HOST, FOXIT_CLIENT_ID, and FOXIT_CLIENT_SECRET environment variables with the values from your Foxit Developer Dashboard. Never commit credentials to source control; use environment variables or a secrets manager.
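The poll_task() loop in the example runs until the task reaches COMPLETED or FAILED, so a task that never reaches either state would block forever. A bounded variant might look like the sketch below. It is not part of the Foxit examples: poll_until_done() and its parameters are hypothetical, and the status fetch is passed in as a callable so the loop can be exercised without network access. Any status other than COMPLETED or FAILED is treated as still in progress.

```python
import time
from typing import Callable


def poll_until_done(
    fetch_status: Callable[[], dict],
    timeout_s: float = 120.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> dict:
    """Poll fetch_status() until COMPLETED or FAILED, giving up after timeout_s."""
    deadline = clock() + timeout_s
    while True:
        status = fetch_status()
        if status["status"] == "COMPLETED":
            return status
        if status["status"] == "FAILED":
            raise RuntimeError(f"Task failed: {status}")
        # Stop if the next wait would run past the deadline
        if clock() + interval_s > deadline:
            raise TimeoutError(f"Task not finished after {timeout_s}s")
        sleep(interval_s)
```

With the functions from the example, fetch_status could be a small lambda that performs the GET on the task endpoint and returns response.json().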

HTML File-to-PDF Conversion

When your content isn’t publicly accessible (internal dashboards, dynamically generated reports), you can upload an HTML file directly. This follows the standard 4-step async pattern:

    def upload_document(file_path: str) -> str:
        """Upload a file to Foxit. Returns a documentId."""
        with open(file_path, "rb") as f:
            response = requests.post(
                f"{HOST}/pdf-services/api/documents/upload",
                files={"file": f},
                headers=AUTH_HEADERS,
            )
        response.raise_for_status()
        return response.json()["documentId"]


    def create_html_to_pdf_task(document_id: str) -> str:
        """Create an HTML-to-PDF conversion task. Returns a taskId."""
        headers = {**AUTH_HEADERS, "Content-Type": "application/json"}
        response = requests.post(
            f"{HOST}/pdf-services/api/documents/create/pdf-from-html",
            json={"documentId": document_id},
            headers=headers,
        )
        response.raise_for_status()
        return response.json()["taskId"]


    # Full workflow: HTML file to PDF
    doc_id = upload_document("report.html")
    task_id = create_html_to_pdf_task(doc_id)
    result = poll_task(task_id)
    download_document(result["resultDocumentId"], "report.pdf")
    print("HTML converted to PDF successfully.")

In this code, you first upload a local .html file via upload_document(), which returns a documentId referencing the uploaded file on Foxit’s servers. Then create_html_to_pdf_task() submits that documentId for conversion. The rest of the workflow is identical: poll for completion, then download the result.

Note: Replace "report.html" with the path to your own HTML file. This code reuses the poll_task() and download_document() functions from the URL-to-PDF example above, so make sure both are defined in the same script.

The key difference: URL-to-PDF skips the upload step (you POST the URL directly), while HTML file conversion requires uploading the .html file first via the /documents/upload endpoint. Both use the same poll-and-download pattern after task creation.

Refer to the Foxit API documentation and the Postman workspace for the complete parameter reference, including any additional rendering options supported by these endpoints. The GitHub demo repository contains working examples in Python, Node.js, and PHP.

Controlling CSS and JavaScript Rendering in HTML-to-PDF Conversion

Regardless of which API you use for HTML-to-PDF conversion, the quality of the output depends on how well you prepare the HTML. The rendering parameters live in your document, not in API request fields.

The single most common rendering problem between “looks right in a browser” and “looks wrong in a PDF” is the CSS media type. By default, browsers render with screen styles, which means your navigation bar, sidebar, and hover states all appear. For PDF output, you want your @media print rules to take over.

Write your print styles explicitly:

    @media print {
      nav,
      .sidebar,
      .no-print {
        display: none;
      }

      body {
        font-size: 11pt;
        font-family: "Inter", Arial, sans-serif;
        color: #000;
      }

      .invoice-table {
        page-break-inside: avoid;
      }

      .page-header {
        page-break-before: always;
      }

      @page {
        size: A4;
        margin: 20mm 15mm;
      }
    }

In this stylesheet, you hide non-essential UI elements (navigation, sidebars) when printing, set a clean body font, use page-break-inside: avoid to keep the invoice table from being split across pages, and use page-break-before: always to start each .page-header on a new page. The nested @page rule sets the paper size and margins at the CSS level, so layout configuration stays in the document rather than in API parameters.
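If different templates need different paper sizes, the @page rule can be generated alongside the HTML rather than hardcoded. The sketch below assumes a hypothetical page_rule() helper in your own template code; it only builds a CSS string and does not call any API.

```python
def page_rule(size: str = "A4", margin: str = "20mm 15mm", landscape: bool = False) -> str:
    """Build a CSS @page rule for the given paper size, margins, and orientation."""
    orientation = " landscape" if landscape else ""
    return (
        "@page {\n"
        f"  size: {size}{orientation};\n"
        f"  margin: {margin};\n"
        "}"
    )


print(page_rule())                                   # same values as the stylesheet above
print(page_rule("Letter", "0.5in", landscape=True))  # hypothetical report layout
```

The returned string can be placed inside the @media print block or a top-level style element of the generated document.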

For font rendering consistency, don’t rely on system fonts. Include a @font-face declaration in your HTML that loads from a CDN, or inline the font as base64:

    <style>
      @font-face {
        font-family: "Inter";
        src: url("https://fonts.gstatic.com/s/inter/v13/UcCO3FwrK3iLTeHuS_fvQtMwCp50KnMw2boKoduKmMEVuLyfAZ9hiJ.woff2")
          format("woff2");
        font-weight: 400;
        font-style: normal;
      }
    </style>

In this snippet, you declare the Inter font in the HTML with a @font-face rule that points to a font file hosted by Google Fonts. As long as that URL is reachable from the rendering environment, Inter renders in the PDF regardless of what fonts are installed in the API’s container. The tradeoff is latency: the rendering engine fetches the font file during conversion. If you’re running high-volume batch jobs, consider inlining the font as a base64 data URI to eliminate that network round trip.
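The base64 option can be sketched as a small helper that turns font bytes into a complete @font-face rule. The function name font_face_rule() is hypothetical, and the code assumes you have a WOFF2 file for the font available locally under a license that permits embedding.

```python
import base64


def font_face_rule(family: str, font_bytes: bytes, weight: int = 400, style: str = "normal") -> str:
    """Build an @font-face rule with the WOFF2 font inlined as a base64 data URI."""
    encoded = base64.b64encode(font_bytes).decode("ascii")
    return (
        "@font-face {\n"
        f'  font-family: "{family}";\n'
        f'  src: url("data:font/woff2;base64,{encoded}") format("woff2");\n'
        f"  font-weight: {weight};\n"
        f"  font-style: {style};\n"
        "}"
    )


# Usage (hypothetical path): read the font once and reuse the rule for every document
# css = font_face_rule("Inter", Path("fonts/Inter-Regular.woff2").read_bytes())
```

Base64 adds roughly a third to the font size inside each HTML file, so this trades upload size for one less network fetch at render time.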

For JavaScript-heavy pages, make sure the content has fully rendered before the API captures it. If you’re using the URL-to-PDF endpoint, the API fetches and renders the live page, so your page’s JavaScript will execute. For the HTML file upload path, keep your HTML self-contained with all data already rendered in the markup rather than relying on client-side JavaScript to populate it after load.
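For the upload path, rendering the data into markup ahead of time can be as simple as building the table server-side. This is a minimal sketch using only the standard library; render_table() and the column names are illustrative, and a real project would more likely use its existing template engine.

```python
from html import escape


def render_table(rows: list[dict], columns: list[str]) -> str:
    """Render rows as a static HTML table, escaping every value."""
    header = "".join(f"<th>{escape(col)}</th>" for col in columns)
    body = "".join(
        "<tr>"
        + "".join(f"<td>{escape(str(row.get(col, '')))}</td>" for col in columns)
        + "</tr>"
        for row in rows
    )
    return f"<table><thead><tr>{header}</tr></thead><tbody>{body}</tbody></table>"


line_items = [{"item": "Hosting & support", "qty": 2}]
print(render_table(line_items, ["item", "qty"]))
```

Because the table is already in the markup, the conversion does not depend on any wait condition for client-side rendering.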

Batch HTML-to-PDF Conversion at Scale

Sequential conversion is the naive starting point:

    for invoice in invoices:
        doc_id = upload_document(invoice.html_path)
        task_id = create_html_to_pdf_task(doc_id)
        result = poll_task(task_id)
        download_document(result["resultDocumentId"], f"output/{invoice.id}.pdf")

In this loop, each invoice is processed one at a time: upload, convert, poll, download, then move to the next. Each iteration blocks on the poll loop before starting the next conversion. At a few seconds per document (upload, render, poll, download), 500 invoices could take over 30 minutes.

The fix is concurrent dispatch with a semaphore to cap parallelism. Check your plan’s rate limits before setting the semaphore ceiling in production.

    import asyncio
    import aiohttp
    import os
    from pathlib import Path

    HOST = os.environ["FOXIT_API_HOST"]
    CLIENT_ID = os.environ["FOXIT_CLIENT_ID"]
    CLIENT_SECRET = os.environ["FOXIT_CLIENT_SECRET"]
    MAX_CONCURRENT = 10  # Adjust based on your plan's rate limits


    async def convert_one(
        session: aiohttp.ClientSession,
        sem: asyncio.Semaphore,
        invoice_id: str,
        html_path: str,
        output_dir: Path,
    ) -> tuple[str, bool]:
        async with sem:
            try:
                auth = {"client_id": CLIENT_ID, "client_secret": CLIENT_SECRET}

                # Step 1: Upload the HTML file
                with open(html_path, "rb") as f:
                    form = aiohttp.FormData()
                    form.add_field("file", f, filename="document.html")
                    async with session.post(
                        f"{HOST}/pdf-services/api/documents/upload",
                        data=form,
                        headers=auth,
                    ) as resp:
                        if resp.status != 200:
                            return invoice_id, False
                        upload_result = await resp.json()
                        doc_id = upload_result["documentId"]

                # Step 2: Create the conversion task
                async with session.post(
                    f"{HOST}/pdf-services/api/documents/create/pdf-from-html",
                    json={"documentId": doc_id},
                    headers={**auth, "Content-Type": "application/json"},
                ) as resp:
                    if resp.status != 200:
                        return invoice_id, False
                    task_result = await resp.json()
                    task_id = task_result["taskId"]

                # Step 3: Poll for completion
                while True:
                    async with session.get(
                        f"{HOST}/pdf-services/api/tasks/{task_id}",
                        headers={**auth, "Content-Type": "application/json"},
                    ) as resp:
                        status = await resp.json()
                    if status["status"] == "COMPLETED":
                        result_doc_id = status["resultDocumentId"]
                        break
                    elif status["status"] == "FAILED":
                        print(f"Task failed for {invoice_id}")
                        return invoice_id, False
                    await asyncio.sleep(5)

                # Step 4: Download the result
                async with session.get(
                    f"{HOST}/pdf-services/api/documents/{result_doc_id}/download",
                    headers=auth,
                ) as resp:
                    if resp.status == 200:
                        pdf_bytes = await resp.read()
                        (output_dir / f"{invoice_id}.pdf").write_bytes(pdf_bytes)
                        return invoice_id, True
                    return invoice_id, False
            except Exception as e:
                print(f"Error converting {invoice_id}: {e}")
                return invoice_id, False


    async def batch_convert(invoices: list[dict], output_dir: str = "output") -> dict:
        output_path = Path(output_dir)
        output_path.mkdir(exist_ok=True)
        sem = asyncio.Semaphore(MAX_CONCURRENT)
        connector = aiohttp.TCPConnector(limit=MAX_CONCURRENT)
        async with aiohttp.ClientSession(connector=connector) as session:
            tasks = [
                convert_one(session, sem, inv["id"], inv["html_path"], output_path)
                for inv in invoices
            ]
            results = await asyncio.gather(*tasks)
        succeeded = [r[0] for r in results if r[1]]
        failed = [r[0] for r in results if not r[1]]
        return {"succeeded": len(succeeded), "failed": failed}


    # Usage
    invoices = [
        {"id": "inv_1042", "html_path": "templates/invoice_1042.html"},
        {"id": "inv_1043", "html_path": "templates/invoice_1043.html"},
        # ... up to thousands of entries
    ]

    result = asyncio.run(batch_convert(invoices))
    print(f"Converted {result['succeeded']} PDFs. Failed: {result['failed']}")

In this code, you use asyncio and aiohttp to process multiple HTML-to-PDF conversions concurrently. The convert_one() function runs the full 4-step workflow (upload, create task, poll, download) for a single invoice, while batch_convert() dispatches all invoices in parallel, capped by a semaphore. Results are collected via asyncio.gather() and split into succeeded and failed lists.

Before running: Set FOXIT_API_HOST, FOXIT_CLIENT_ID, and FOXIT_CLIENT_SECRET as environment variables with your credentials from the Developer Dashboard. Adjust MAX_CONCURRENT based on your plan’s rate limits, and update the invoices list with your actual file paths.

With MAX_CONCURRENT = 10 and several seconds per conversion (including polling), the batch processes 10 documents at a time instead of one at a time. The semaphore prevents you from flooding the API with simultaneous requests and hitting the rate limit ceiling. Beyond aiohttp, no additional dependencies are needed since asyncio is part of Python’s standard library.
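To put rough numbers on the difference, the estimate below models the batch as waves of MAX_CONCURRENT documents. It is a simplification: the 4 seconds per document is an assumed figure standing in for "a few seconds", and real batches will not complete in perfectly aligned waves.

```python
import math


def estimated_batch_seconds(documents: int, seconds_per_document: float, concurrency: int = 1) -> float:
    """Rough wall-clock estimate: documents processed in waves of `concurrency`."""
    waves = math.ceil(documents / concurrency)
    return waves * seconds_per_document


sequential = estimated_batch_seconds(500, 4.0)                 # one at a time
concurrent = estimated_batch_seconds(500, 4.0, concurrency=10)  # MAX_CONCURRENT = 10

print(f"sequential: {sequential / 60:.1f} min, concurrent: {concurrent / 60:.1f} min")
```

Under these assumptions the batch drops from roughly half an hour to a few minutes, provided the API's rate limits allow ten requests in flight.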

Credit consumption at scale: the Developer plan includes 500 credits/year. The Startup plan ($1,750/year) provides 3,500 credits. Each conversion typically costs 1 credit. For higher volumes, the Business plan ($4,500/year) includes 150,000 credits. Check your remaining credit balance via the Developer Dashboard before launching a large batch job.
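A quick projection helps decide which plan a workload fits before a batch runs. The sketch below uses the plan figures quoted above and assumes one credit per conversion; both function names are hypothetical, and actual credit costs should be confirmed against the pricing page.

```python
# Credits per year, as quoted in the text above
PLAN_CREDITS = {"Developer": 500, "Startup": 3_500, "Business": 150_000}


def credits_needed(conversions_per_day: int, days: int = 365, credits_per_conversion: int = 1) -> int:
    """Project yearly credit consumption for a steady daily volume."""
    return conversions_per_day * days * credits_per_conversion


def plans_that_cover(required: int) -> list[str]:
    """Return the plans whose yearly allocation is at least `required` credits."""
    return [name for name, credits in PLAN_CREDITS.items() if credits >= required]


nightly_500 = credits_needed(500)
print(nightly_500, plans_that_cover(nightly_500))
```

If one credit per conversion holds, 500 conversions every night comes to 182,500 credits per year, which is more than any of the three allocations listed here.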

For volumes beyond what a single process can handle efficiently, a queue-based architecture decouples submission from processing. Services like Amazon SQS or Redis Streams handle the message brokering:

    App Server → Message Queue (SQS / Redis Streams) → Worker Pool (N workers)
    Worker: upload HTML → create task → poll → download PDF → store in S3/GCS
    Worker: update job status in Postgres / Redis

Each worker picks a job from the queue, runs the 4-step conversion workflow, writes the resulting PDF to S3 or GCS, and updates the job status in a database. This pattern handles burst volume naturally: jobs queue up during spikes, workers drain at the rate the API allows, and your app server is never blocked waiting for conversions to complete.
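The worker loop can be illustrated in-process with the standard library, using queue.Queue as a stand-in for SQS or Redis Streams and a dictionary as a stand-in for the status table. Everything here is a sketch: run_workers() is a hypothetical name, and the convert callable is where the upload, create task, poll, and download steps would go.

```python
import queue
import threading
from typing import Callable


def run_workers(jobs: list[dict], convert: Callable[[dict], object], num_workers: int = 4) -> dict[str, str]:
    """Drain a job queue with a fixed pool of workers and record each job's status."""
    job_queue: queue.Queue = queue.Queue()
    for job in jobs:
        job_queue.put(job)

    statuses: dict[str, str] = {}  # stand-in for a Postgres/Redis status table
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                job = job_queue.get_nowait()
            except queue.Empty:
                return  # queue drained, worker exits
            try:
                convert(job)
                outcome = "done"
            except Exception as exc:  # one bad job must not kill the worker
                outcome = f"failed: {exc}"
            with lock:
                statuses[job["id"]] = outcome

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return statuses
```

In a real deployment the queue outlives the process, so workers would block on the broker for new messages instead of exiting when it is empty.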

Production Deployment Patterns for HTML-to-PDF Pipelines

Error Handling and Retry Logic

Not all errors warrant a retry. Map HTTP status codes to decisions before writing any retry logic.

A 400 Bad Request means your request body is malformed. Retrying the same payload returns another 400. Fix the payload, don’t retry. A 429 Too Many Requests and a 503 Service Unavailable are transient: back off and retry. A FAILED task status means the conversion itself failed (possibly due to invalid HTML or unreachable URLs); check the task response for diagnostic details.

    import time
    import random
    import requests
    from requests.exceptions import RequestException

    PERMANENT_ERRORS = {400, 401, 403, 422}
    TRANSIENT_ERRORS = {429, 500, 502, 503, 504}


    def post_with_retry(
        url: str,
        max_retries: int = 4,
        base_delay: float = 1.0,
        **kwargs,
    ) -> requests.Response:
        """POST with exponential backoff and jitter for transient errors."""
        for attempt in range(max_retries + 1):
            try:
                response = requests.post(url, timeout=60, **kwargs)
                if response.status_code in range(200, 300):
                    return response
                if response.status_code not in TRANSIENT_ERRORS:
                    # Covers PERMANENT_ERRORS and any other unexpected status
                    # (e.g. 404): retrying the same request will not help.
                    kind = "Permanent" if response.status_code in PERMANENT_ERRORS else "Unexpected"
                    raise ValueError(
                        f"{kind} error {response.status_code}: {response.text}"
                    )
                if attempt == max_retries:
                    raise RuntimeError(
                        f"Max retries exceeded. Last status: {response.status_code}"
                    )
                delay = base_delay * (2 ** attempt) + random.uniform(0, 0.5)
                print(f"Transient error {response.status_code}. Retrying in {delay:.1f}s...")
                time.sleep(delay)
            except RequestException:
                # Network-level failure (timeout, connection reset): back off and retry
                if attempt == max_retries:
                    raise
                delay = base_delay * (2 ** attempt) + random.uniform(0, 0.5)
                time.sleep(delay)
        raise RuntimeError("Unexpected: exhausted retries without returning or raising")


    # Usage with the URL-to-PDF endpoint
    auth_headers = {
        "client_id": CLIENT_ID,
        "client_secret": CLIENT_SECRET,
        "Content-Type": "application/json",
    }

    response = post_with_retry(
        f"{HOST}/pdf-services/api/documents/create/pdf-from-url",
        json={"url": "https://example.com/invoice/1042"},
        headers=auth_headers,
    )
    task_id = response.json()["taskId"]

In this code, you wrap every POST request in a retry loop with exponential backoff. The function distinguishes between permanent errors (like 400 or 401, which should not be retried) and transient errors (like 429 or 503, which usually resolve on their own). Any other non-2xx status is treated like a permanent error and raised immediately rather than retried without a delay. Each retry doubles the wait time and adds random jitter to avoid synchronized retry waves.

Before running: Replace CLIENT_ID, CLIENT_SECRET, and HOST with your Foxit credentials and API host, or load them from environment variables as shown in the earlier examples.

The jitter (random.uniform(0, 0.5)) prevents a thundering herd where every worker wakes up and retries simultaneously after a 429 burst. Without it, plain exponential backoff still produces synchronized retry waves when all workers hit the rate limit at the same time.
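The effect of the jitter is easier to see by printing the delay schedule on its own. The helper below mirrors the formula used in post_with_retry(); backoff_delays() itself is a hypothetical function added for illustration, and the seeded generators stand in for two independent workers.

```python
import random
from typing import Optional


def backoff_delays(
    max_retries: int = 4,
    base_delay: float = 1.0,
    jitter: float = 0.5,
    rng: Optional[random.Random] = None,
) -> list[float]:
    """Delays before each retry: base_delay * 2**attempt plus up to `jitter` seconds."""
    rng = rng or random.Random()
    return [base_delay * (2 ** attempt) + rng.uniform(0, jitter) for attempt in range(max_retries)]


# Two workers hitting a 429 at the same moment end up on slightly different schedules
worker_a = backoff_delays(rng=random.Random(1))
worker_b = backoff_delays(rng=random.Random(2))
print([round(d, 2) for d in worker_a])
print([round(d, 2) for d in worker_b])
```

Each delay stays within half a second of the pure exponential value (1, 2, 4, 8 seconds), which is enough to spread the retries out without changing the overall backoff curve.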

Output Optimization: Compression and Linearization

After conversion, you can chain additional PDF operations using the same async pattern. Upload the resulting PDF, call the compression or linearization endpoint, poll, and download the optimized version.

For PDFs served directly in a browser, linearization enables Fast Web View, which lets the browser display page one while the rest of the file downloads:

    def compress_and_linearize(input_pdf_path: str, output_path: str) -> None:
        """Compress a PDF, then linearize it for fast web viewing."""
        auth = {"client_id": CLIENT_ID, "client_secret": CLIENT_SECRET}
        json_headers = {**auth, "Content-Type": "application/json"}

        # Upload the PDF
        doc_id = upload_document(input_pdf_path)

        # Compress
        resp = requests.post(
            f"{HOST}/pdf-services/api/documents/modify/pdf-compress",
            json={"documentId": doc_id, "compressionLevel": "MEDIUM"},
            headers=json_headers,
        )
        resp.raise_for_status()
        task = poll_task(resp.json()["taskId"])
        compressed_doc_id = task["resultDocumentId"]

        # Linearize the compressed result (no need to re-upload; use the resultDocumentId)
        resp = requests.post(
            f"{HOST}/pdf-services/api/documents/optimize/pdf-linearize",
            json={"documentId": compressed_doc_id},
            headers=json_headers,
        )
        resp.raise_for_status()
        task = poll_task(resp.json()["taskId"])

        # Download the final optimized PDF
        download_document(task["resultDocumentId"], output_path)

In this code, you chain two PDF operations back-to-back. First, you upload the PDF and compress it at MEDIUM level (valid options are LOW, MEDIUM, and HIGH). Once compression completes, you pass the resultDocumentId directly into the linearization step, which avoids a second upload. The final download gives you a PDF that is both smaller and optimized for progressive loading in browsers.

Note: This function reuses upload_document(), poll_task(), and download_document() from the earlier examples. Make sure those functions are defined in the same script with your credentials configured. The Foxit developer blog post on chaining PDF actions covers this pattern in detail.

Monitoring and Secret Management

Track three metrics per conversion job: latency (to detect API degradation), credit consumption per job type (to project when you’ll exhaust your plan), and failure rate by error code (to catch template regressions before they hit customers). Set an alert when remaining credits drop below 20% of your plan allocation. The Foxit Developer Dashboard exposes real-time usage data you can check before launching batch runs.
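These three metrics fit in a small in-process tracker while you decide on a real metrics backend. The class below is a sketch with hypothetical names; it assumes the caller reports how many credits each job consumed, since whether failed conversions are billed is not stated here.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ConversionMetrics:
    plan_credits: int
    credits_used: int = 0
    latencies_s: list = field(default_factory=list)
    failures_by_code: Counter = field(default_factory=Counter)

    def record(self, latency_s: float, credits: int = 1, error_code: Optional[int] = None) -> None:
        """Record one conversion job; pass error_code for failed jobs."""
        self.latencies_s.append(latency_s)
        self.credits_used += credits
        if error_code is not None:
            self.failures_by_code[error_code] += 1

    def failure_rate(self) -> float:
        total = len(self.latencies_s)
        return sum(self.failures_by_code.values()) / total if total else 0.0

    def credits_low(self, threshold: float = 0.20) -> bool:
        """True when remaining credits drop below `threshold` of the plan allocation."""
        remaining = self.plan_credits - self.credits_used
        return remaining < self.plan_credits * threshold
```

A batch runner could call credits_low() before dispatching work and refuse to start when it returns True.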

API credentials go in environment variables or a secrets manager (AWS Secrets Manager, HashiCorp Vault, GCP Secret Manager). Rotate credentials from the Developer Dashboard when team members leave or when you suspect a credential has been exposed. You can generate new credentials and revoke old ones without a service interruption if you update your environment first.
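Failing fast on missing credentials is cheaper than discovering them halfway through a batch. A minimal sketch, assuming the same three environment variable names used in the examples above; load_credentials() is a hypothetical helper, and a secrets manager client would replace the environment lookup in a managed setup.

```python
import os
from typing import Mapping

REQUIRED_VARS = ("FOXIT_API_HOST", "FOXIT_CLIENT_ID", "FOXIT_CLIENT_SECRET")


def load_credentials(env: Mapping[str, str] = os.environ) -> dict[str, str]:
    """Return the required settings, or raise with the names of any that are missing."""
    missing = [name for name in REQUIRED_VARS if not env.get(name)]
    if missing:
        raise RuntimeError("Missing environment variables: " + ", ".join(missing))
    return {name: env[name] for name in REQUIRED_VARS}
```

The error message lists variable names only, so credential values never end up in logs.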

Run Your First HTML-to-PDF Conversion

Sign up for the Foxit Developer plan at no cost, no credit card, with 500 credits available immediately. Generate your client_id and client_secret from the Developer Dashboard. Clone the demo repository for working examples in Python, Node.js, and PHP, or copy the URL-to-PDF example from this guide and run it against a public page.

After your first conversion completes, check your credit usage in the Dashboard to validate your throughput estimate and cost projection for production volume. The Startup plan ($1,750/year for 3,500 credits) is self-serve with no sales call required if you need more capacity.